Search has come a long way.

We used to Press Enter in blind faith, hoping we’d find our treasure. If not, we’d add or remove a word, or rephrase the query entirely, and click Enter again. And again. And don’t talk about typos – for those of us who were not native speakers or with bad typing or spelling skills, we’d just give up.

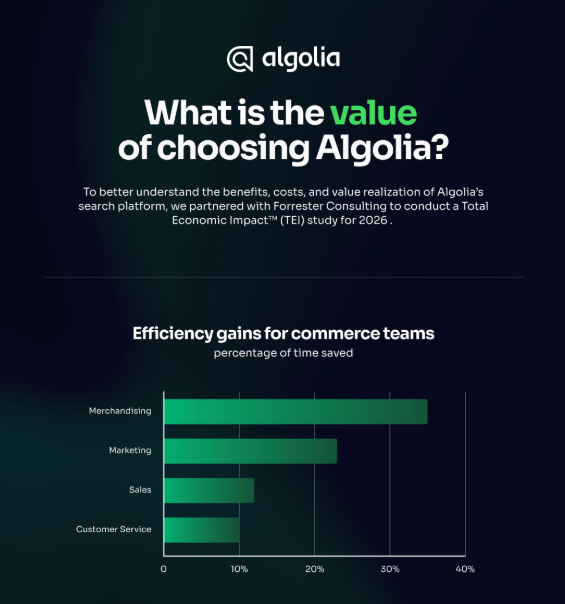

Well, search changed a few years back when SaaS (Search-as-a-Service) companies hit the market and showed us how search ought to be: fast and relevant; as you type instant results; vibrant UI/UX; facets and filtering.

Gone was the Enter key.

And SaaS technologies continued to advance, adding more product detail, customer reviews and ratings, multiple photos, recommendations, and merchandising – with even more blazing speeds and relevance, framed within a far easier navigation.

Search has now become essential to internet shopping, and profitable for competitive online businesses.

But again, search has come a long way, reaching a new milestone: question answering and knowledge. Take a look at this:

With knowledge, we no longer have to leave the search screen. We can stay and ask more questions, receive multiple answers, click on related questions, and read a summary of the topic with links to go further.

All with a single query. Or with voice, or images. (Not yet thought.)

And this is only the beginning. GPT is right around the corner. Search is no longer the same.

Business is no longer the same. All companies, large or small, in any field – ecommerce, media, travel — can implement question answering and knowledge panels as a part of their knowledge management and digital platforms.

Why has presenting knowledge alongside search results changed everything?

The importance of knowledge

In the good old days, alchemists worked in secrecy, greedily concealing their knowledge from others. What they failed to understand was that their knowledge (right or wrong) was more golden than the gold they failed to create.

They didn’t appreciate that sharing knowledge advances human understanding and performance.

Knowledge is an asset. Companies that understand the monetary and competitive value of knowledge learn to free up their data and personalize it for their employees, business partners, and customers.

Hence, the importance of knowledge management.

Where to start with knowledge management? The search bar.

The starting point for any successful and truly useful knowledge management system is to understand what people need while using such a system. And there’s no better place to start than at the beginning, where the knowledge is first obtained: the search interface.

Consider three search use cases that knowledge management can address:

- Classic Search: Searching for a particular person, place, or thing, to do something immediately with it, such as buy (ecommerce), play (media streaming), or read.

- Questions & Answers: Looking to find answers to precise questions.

- Knowledge: Looking for information to help you perform a task, browse, research, make decisions, interact with others, learn something new, and so on. Essentially, looking to improve your brain, performance, and decision-making.

*Note that semantic search is missing from this list. That’s because semantic search, like any AI search, such as NLP, NLU, LMs, and GPT, are not use cases but search technologies that can be used in all three of the above use cases. We will discuss semantic search as we go along.

So, what kind of search interface could satisfy all three distinct use cases?

Let’s combine the intentions behind all these use case into one phrase:

Users need access to all available, necessary, and useful information, and in the right format, to satisfy their immediate intentions.

Quite dense, this phrase. How’s this:

People need a search interface that provides them with the information they need with only a few search terms.

When we think of such a “system”, we often jump to the “information” part and ask: How can we combine our many separate data sources and different formats and processes? This takes us into system architecture, data science, and application programming. And rightly so. But it skips the essential point – the why – Why do we need knowledge management?

By starting with the end-user experience – access to information by searching – we place the focus on finding and using knowledge.

In other words, it’s all about being able to find the best and most useful information. And digital finding starts with a search bar or a voice assistant — or with what we call federated search.

What is federated search in the context of knowledge management

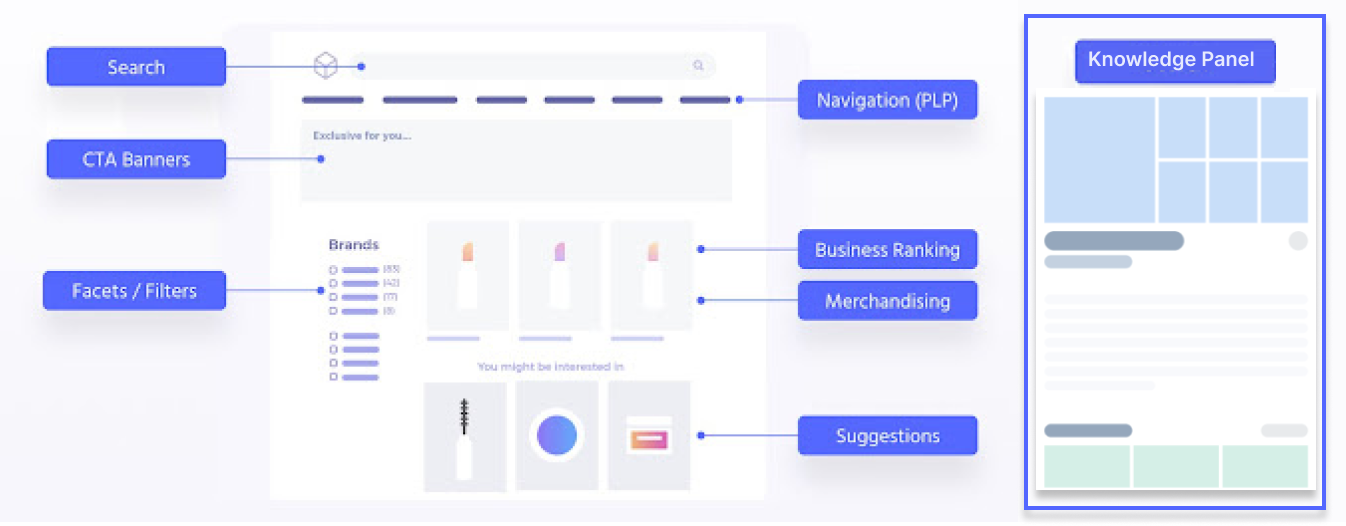

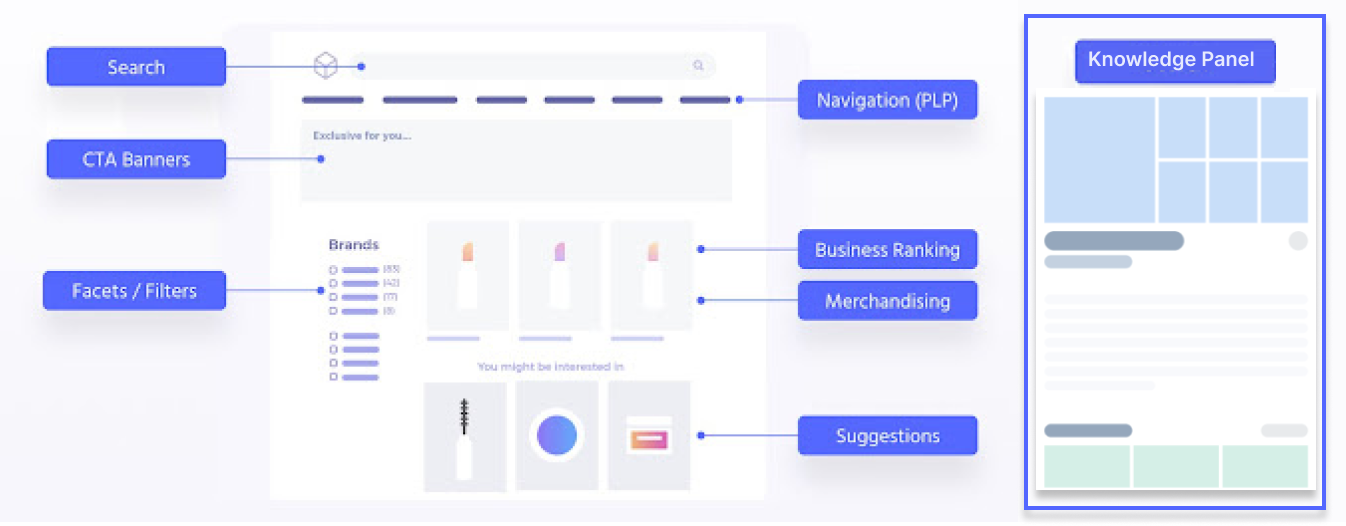

Federated search, or multi-dimensional search, is the display of distinct pieces of information carefully placed on a single search interface. It gives the user a variety of information and options with every query. Take a look at the following schema:

The general idea is that a single query displays a variety of information, such as standard results, hyperlinks, facets and filters, menus, merchandising and recommendations, and a larger “knowledge panel” that provides a fairly complete answer to a question or a summary of some related topic.

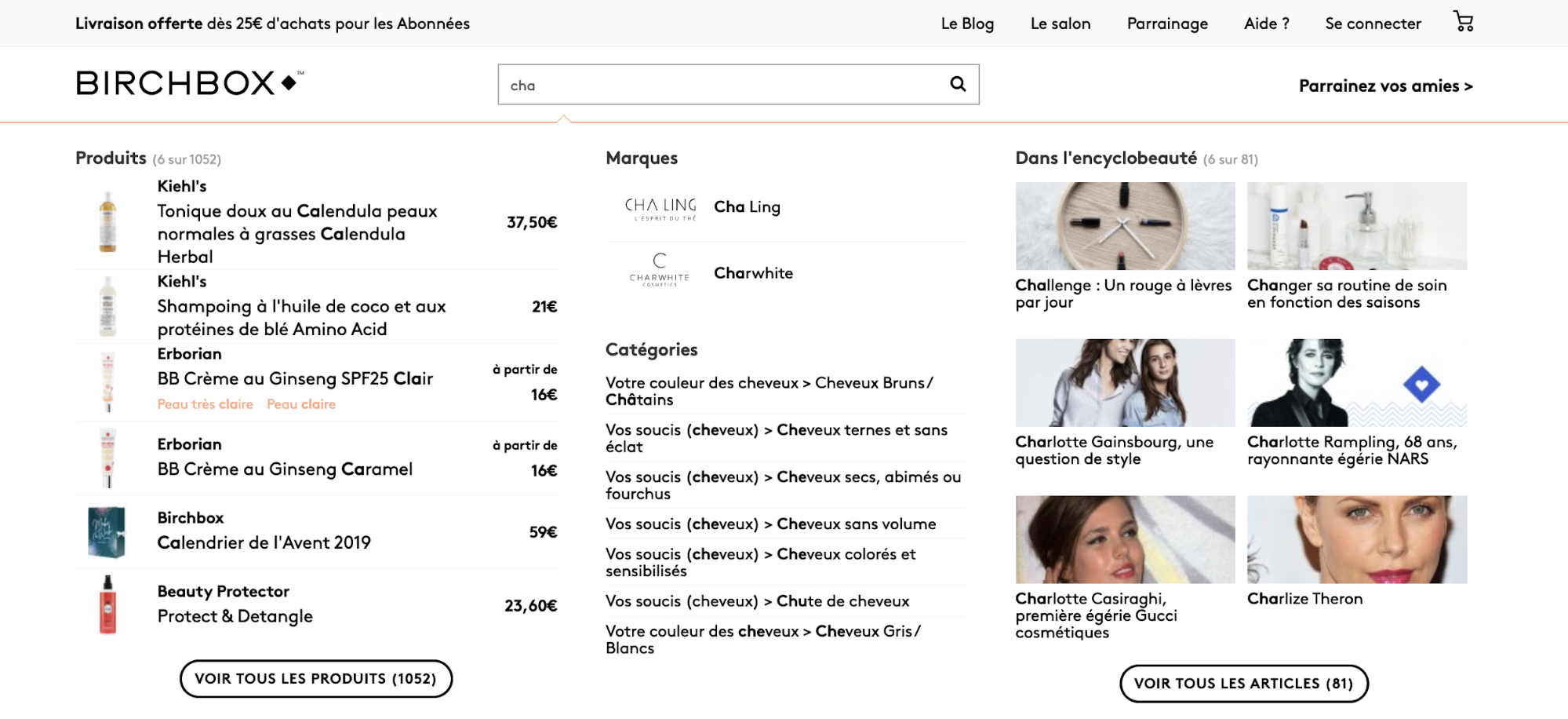

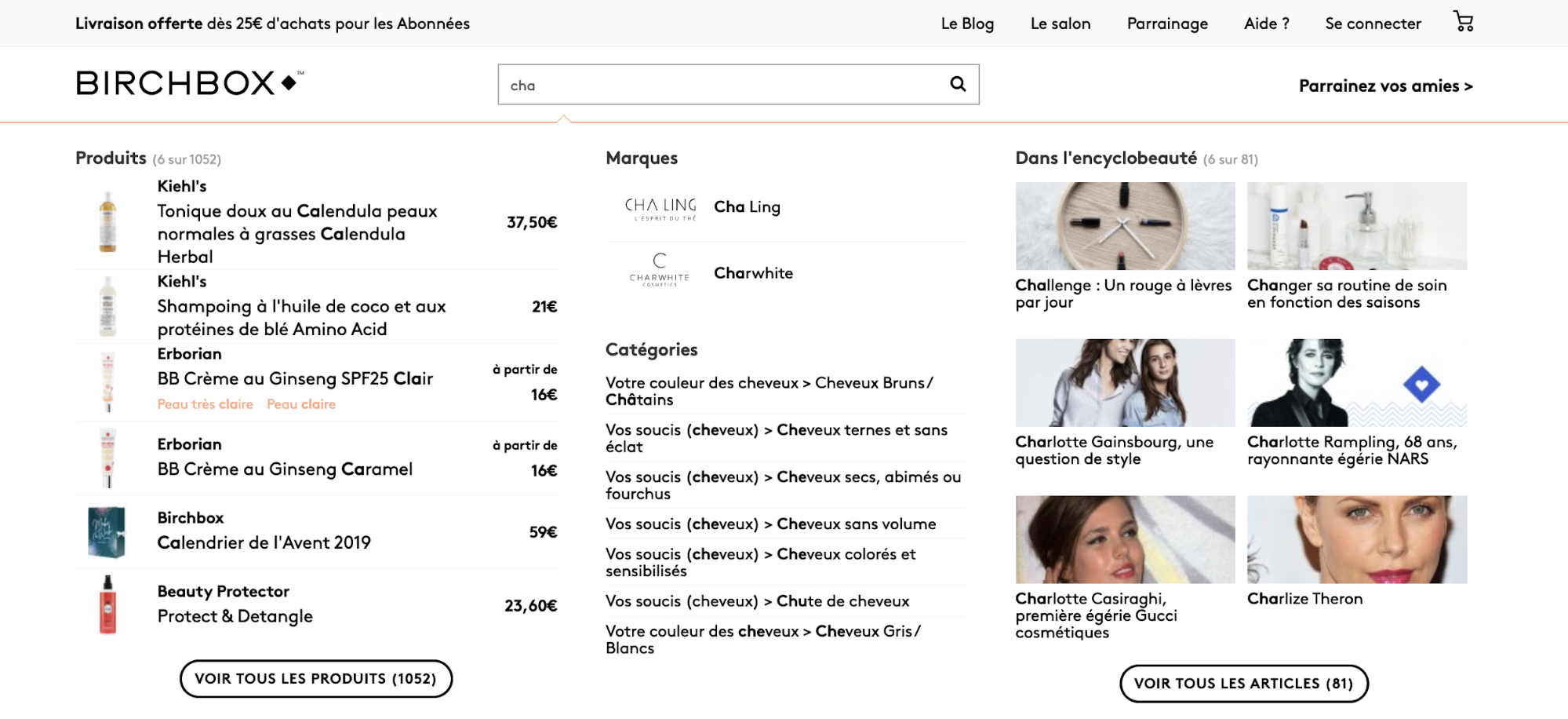

We can see federated search in action on Birchbox’s search page. Note the product listing, facets, categories, and an “Encyclopedia” of FAQs and articles on the right:

The essence of a sort of knowledge management ecommerce is that a variety of relevant information can be found so easily and quickly.

In the remaining two sections, we’ll look closely at:

- Examples of front end of knowledge management

- The back end processes that generate knowledge from a variety of datasources

The front end – knowledge management on the federated search interface

As a reminder, there are three use cases:

- Classic Search

- Questions & Answers

- Knowledge Content

The ecommerce use case – combining Classic Search with Content

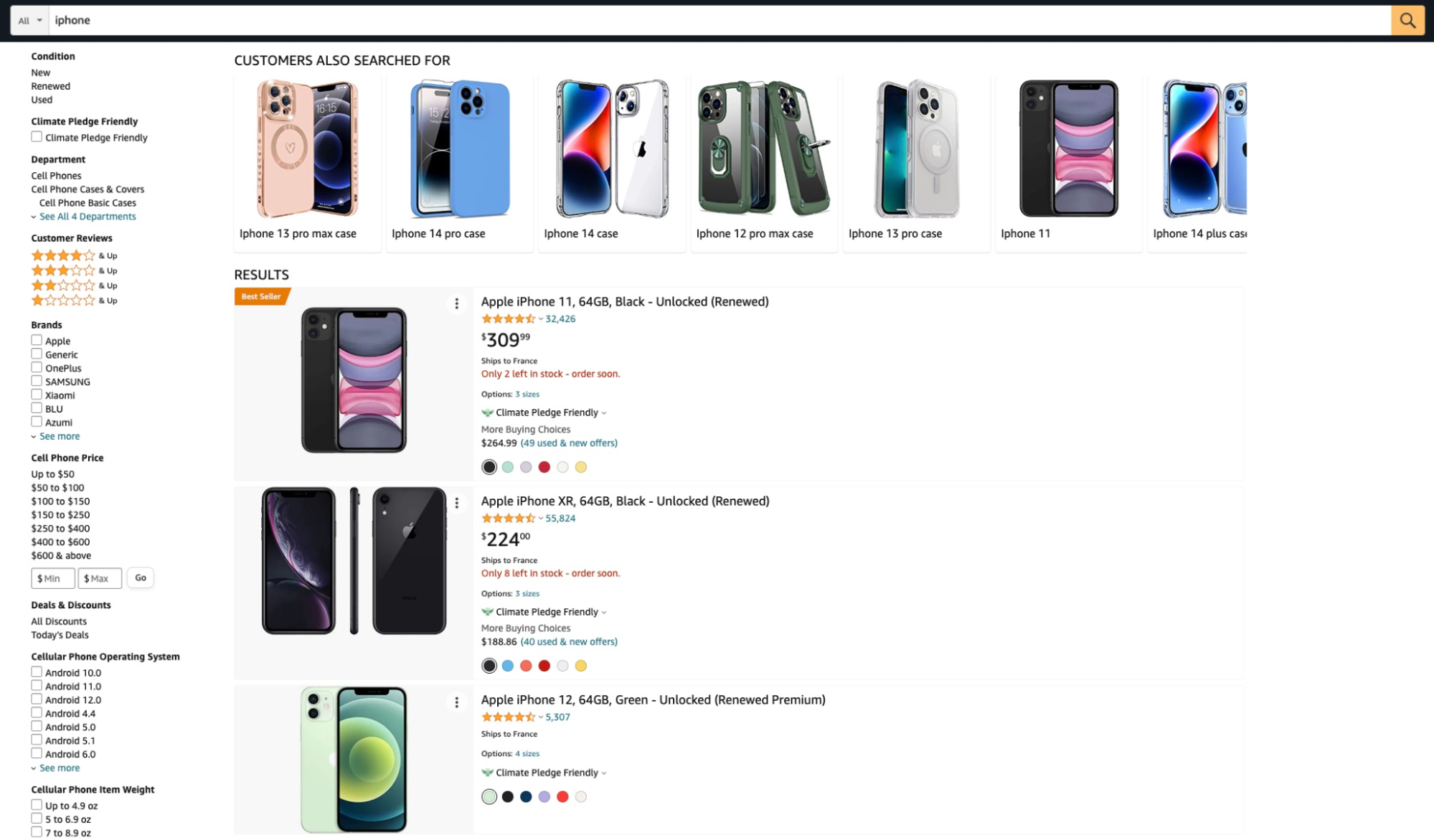

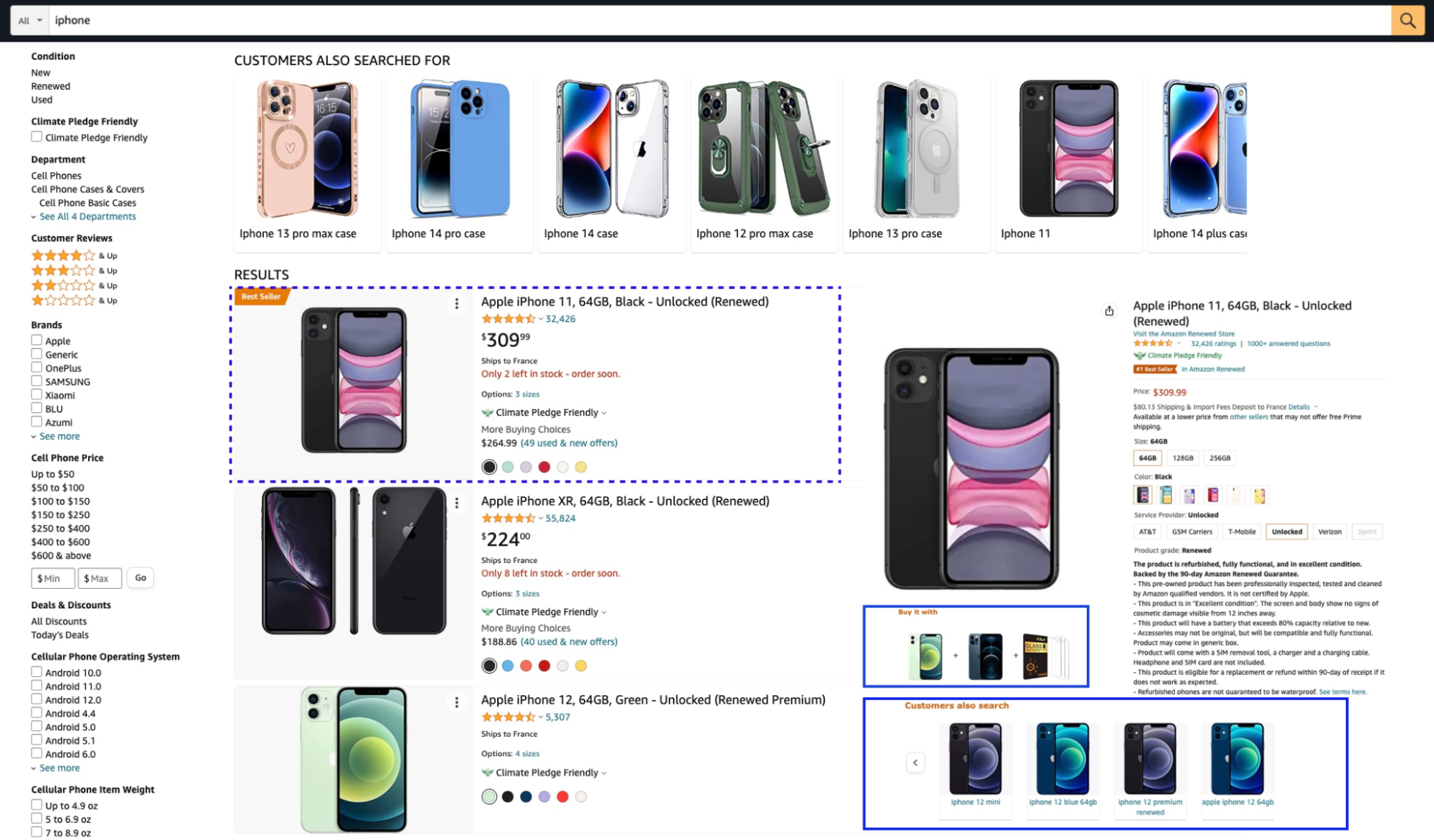

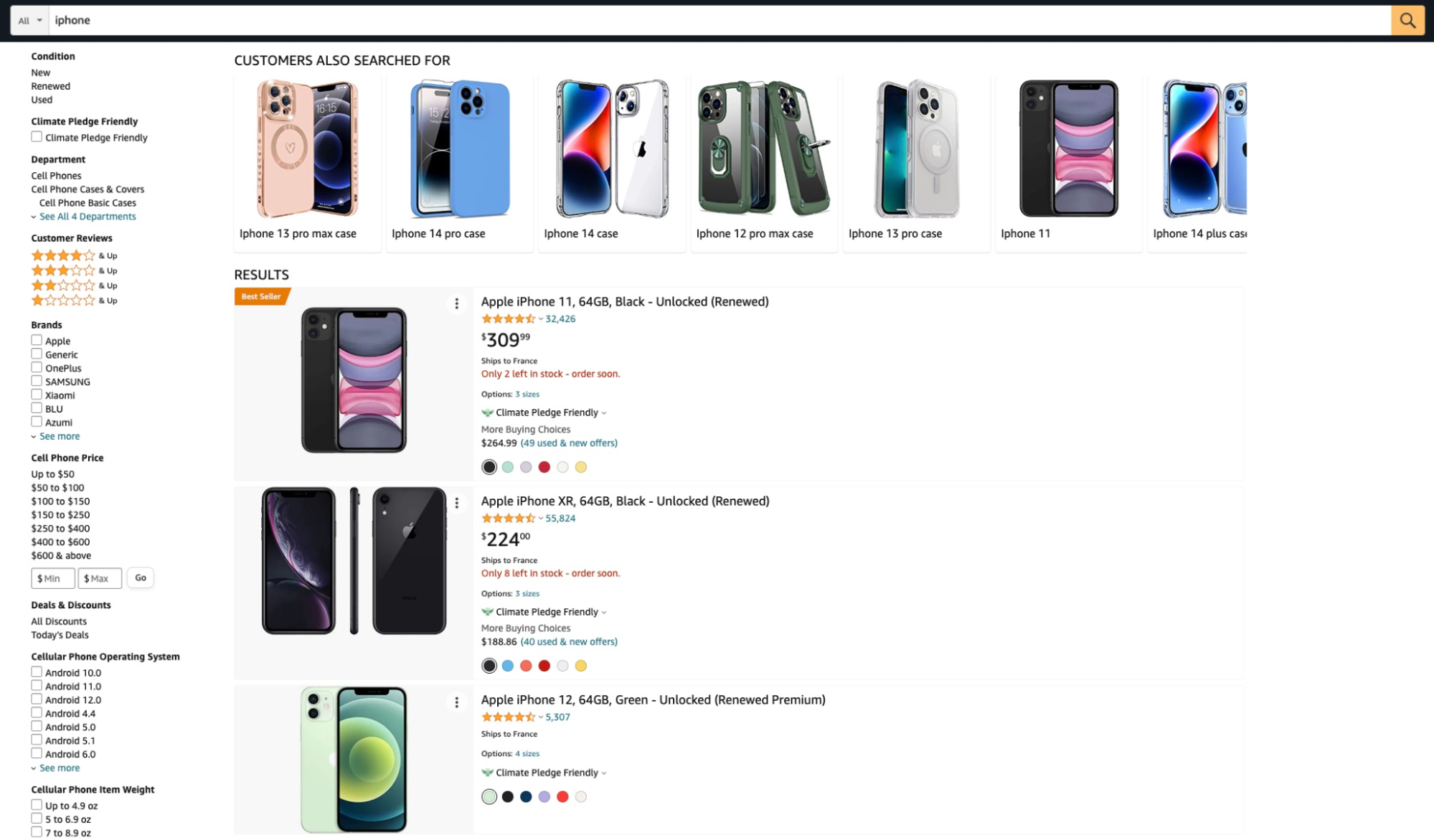

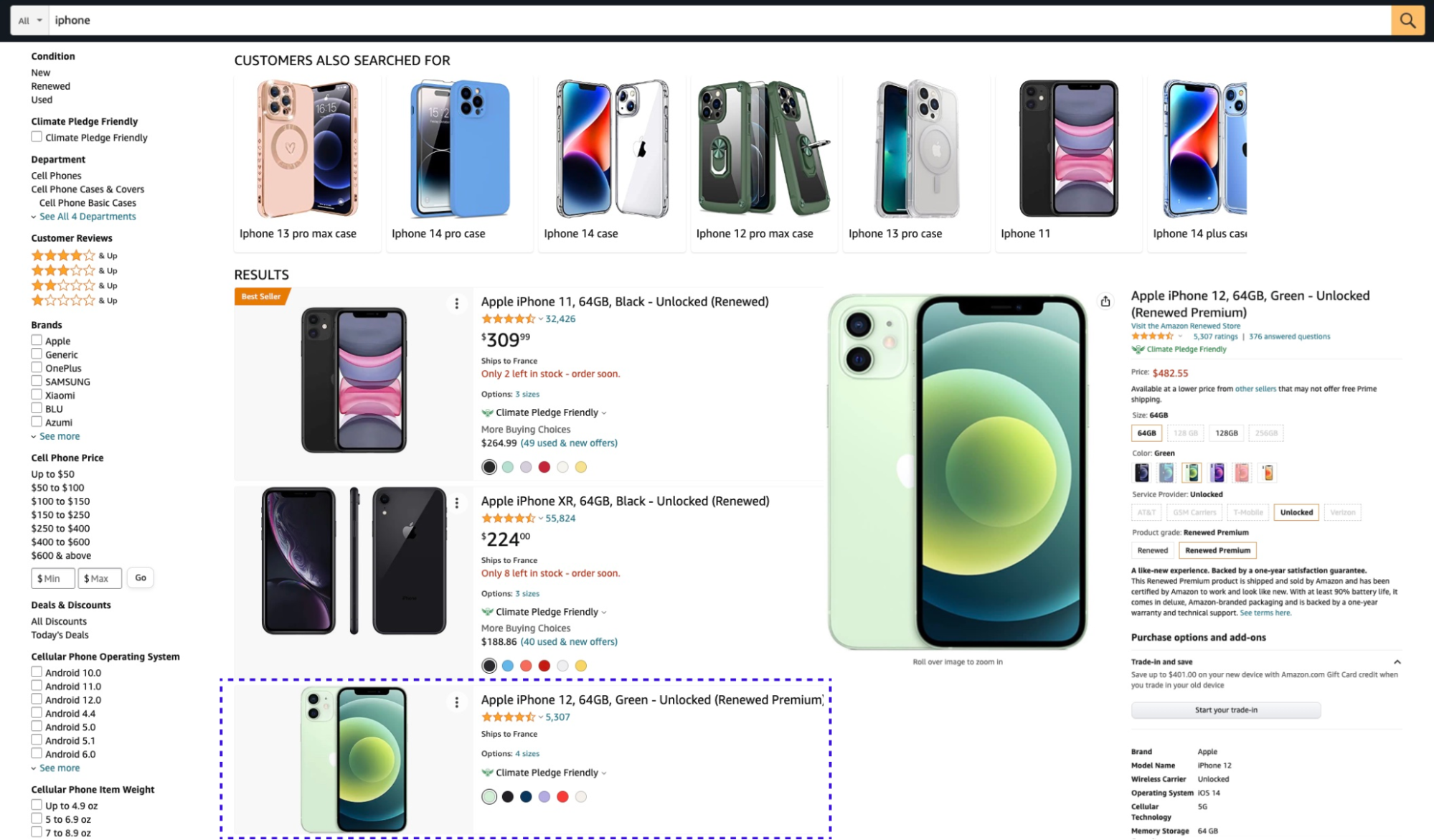

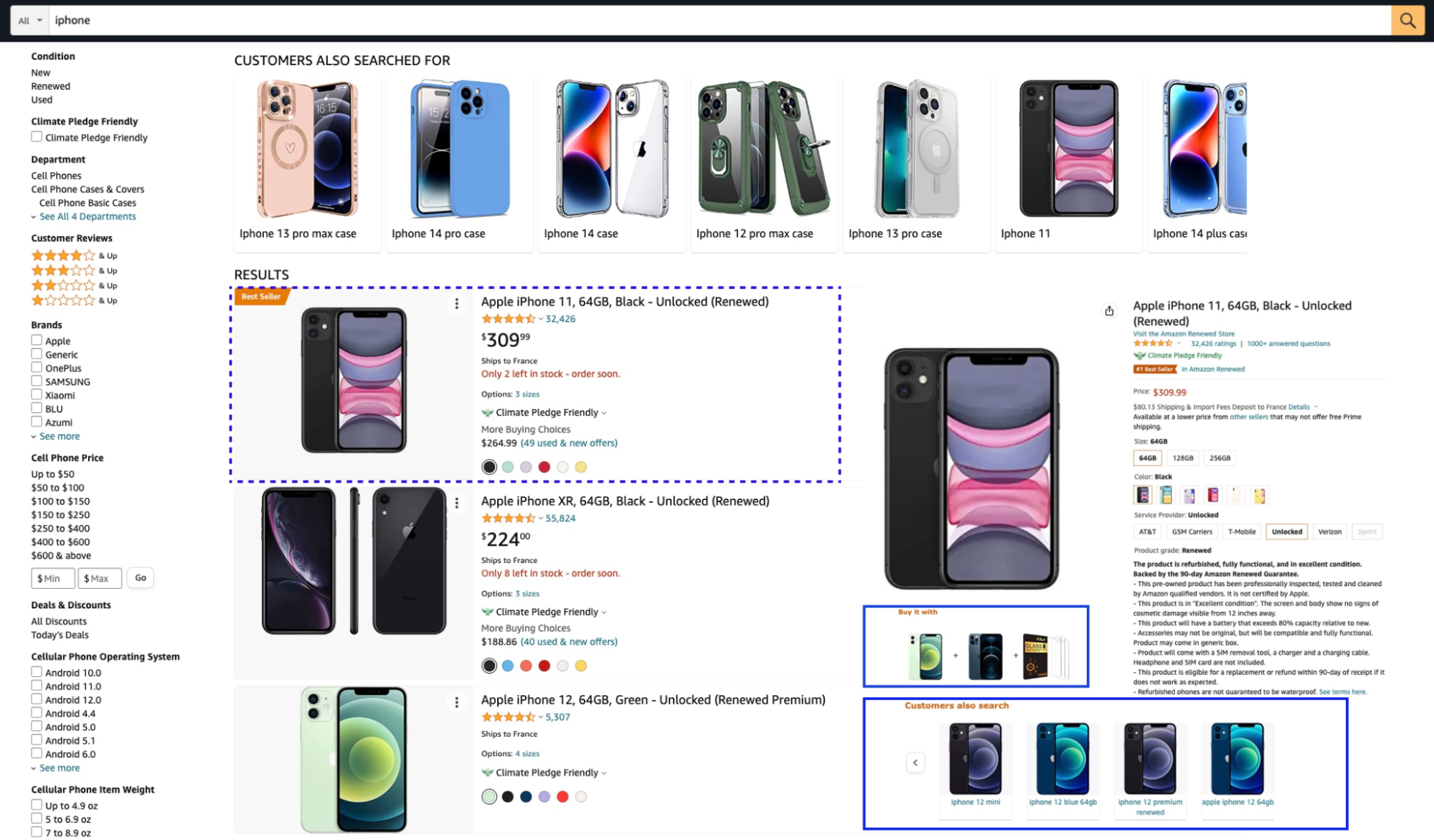

Classic ecommerce search returns a list of products with short descriptions, images, price, etc. Using that information, users can choose to view or buy an item, or change their search. Most ecommerce websites provide faceting and filtering and recommendations of related items. Amazon provides the model for most ecommerce businesses.

This is what classic search looks like.

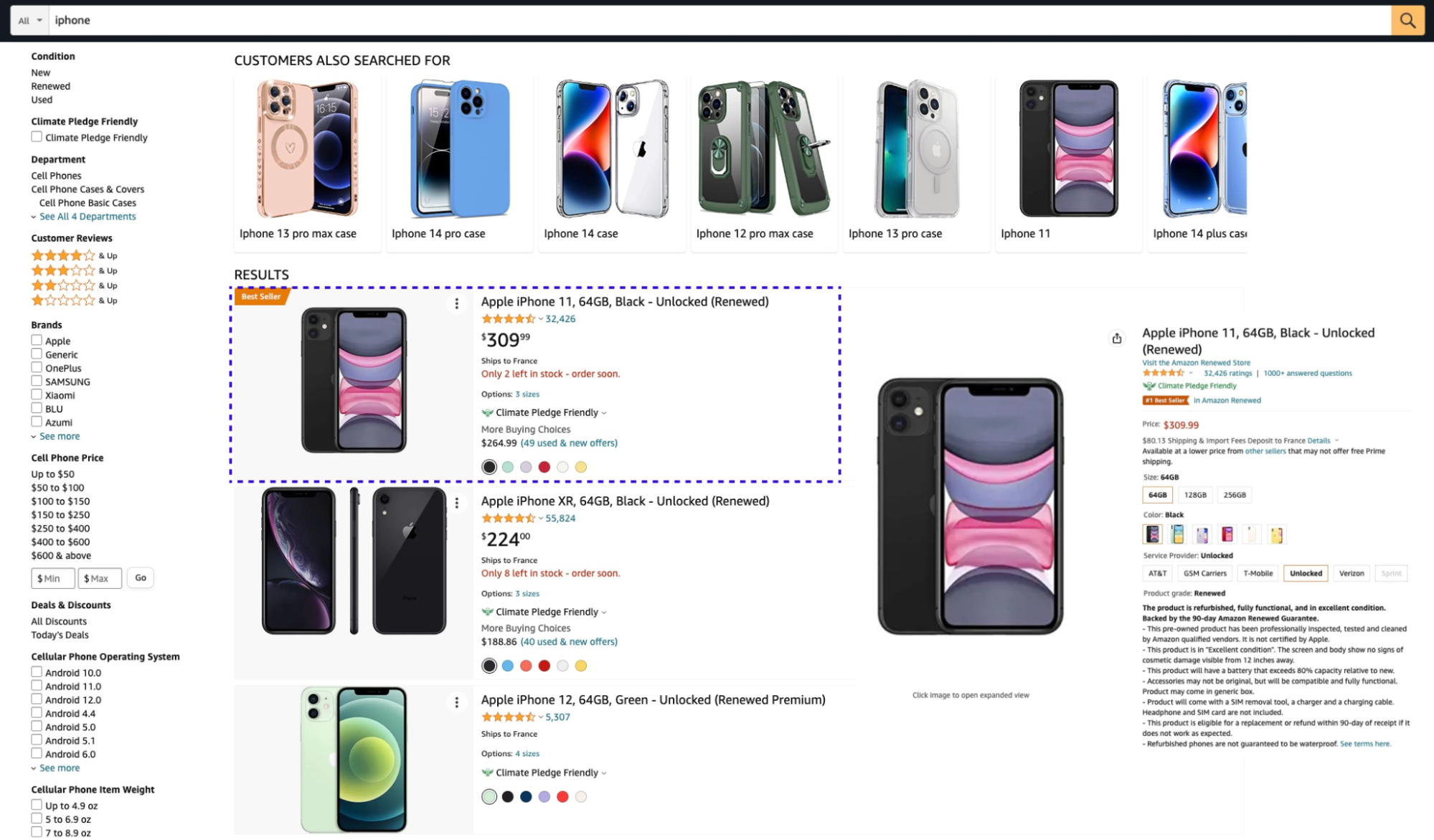

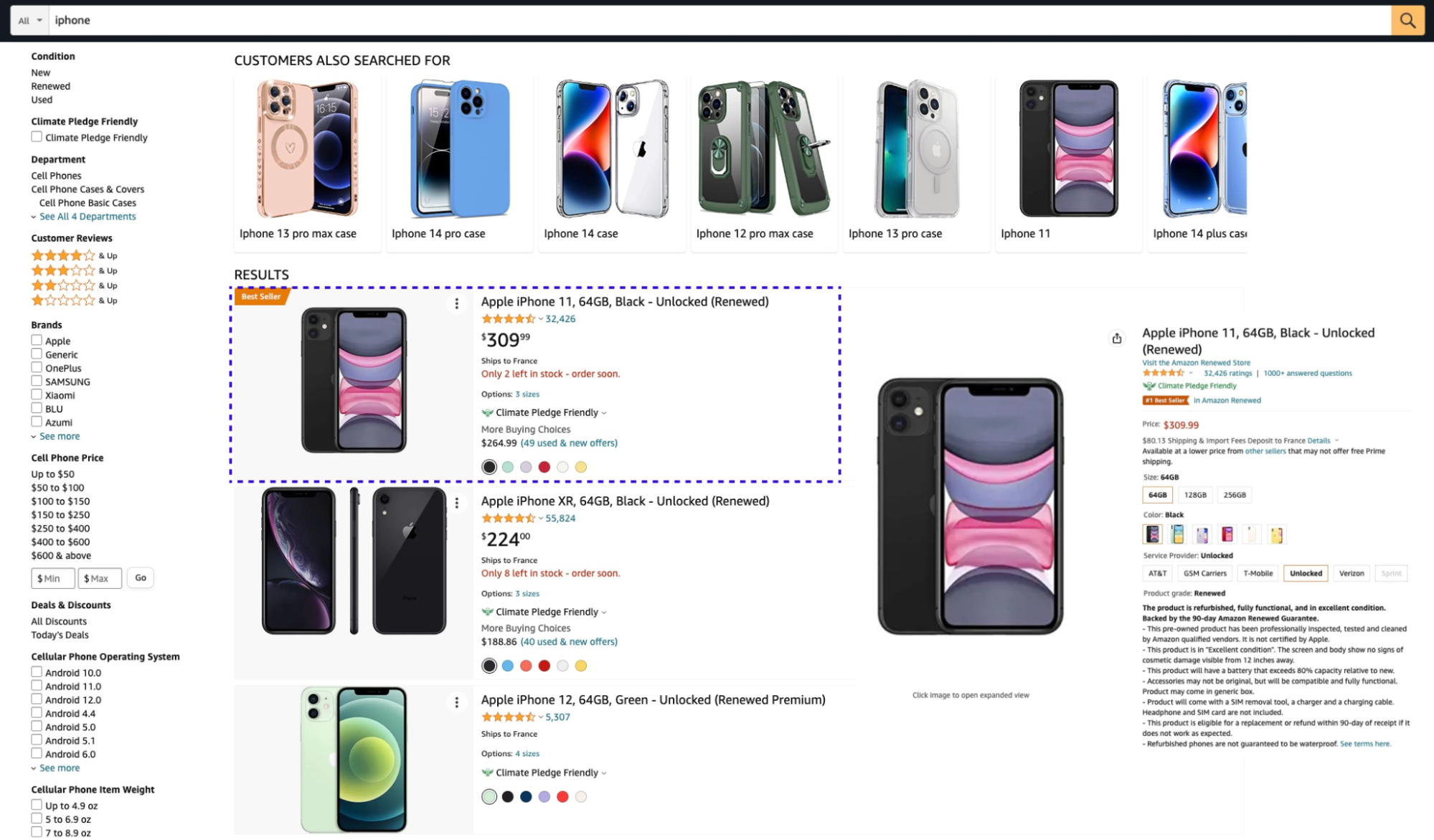

But you can also add knowledge to the classic search experience. The right side was empty, so we added some additional information:

We’ve added an informative snapshot of the first result. It contains a summary of the phone’s details, ratings, shipping information, options, and more. You can also click on the item’s options or see the reviews.

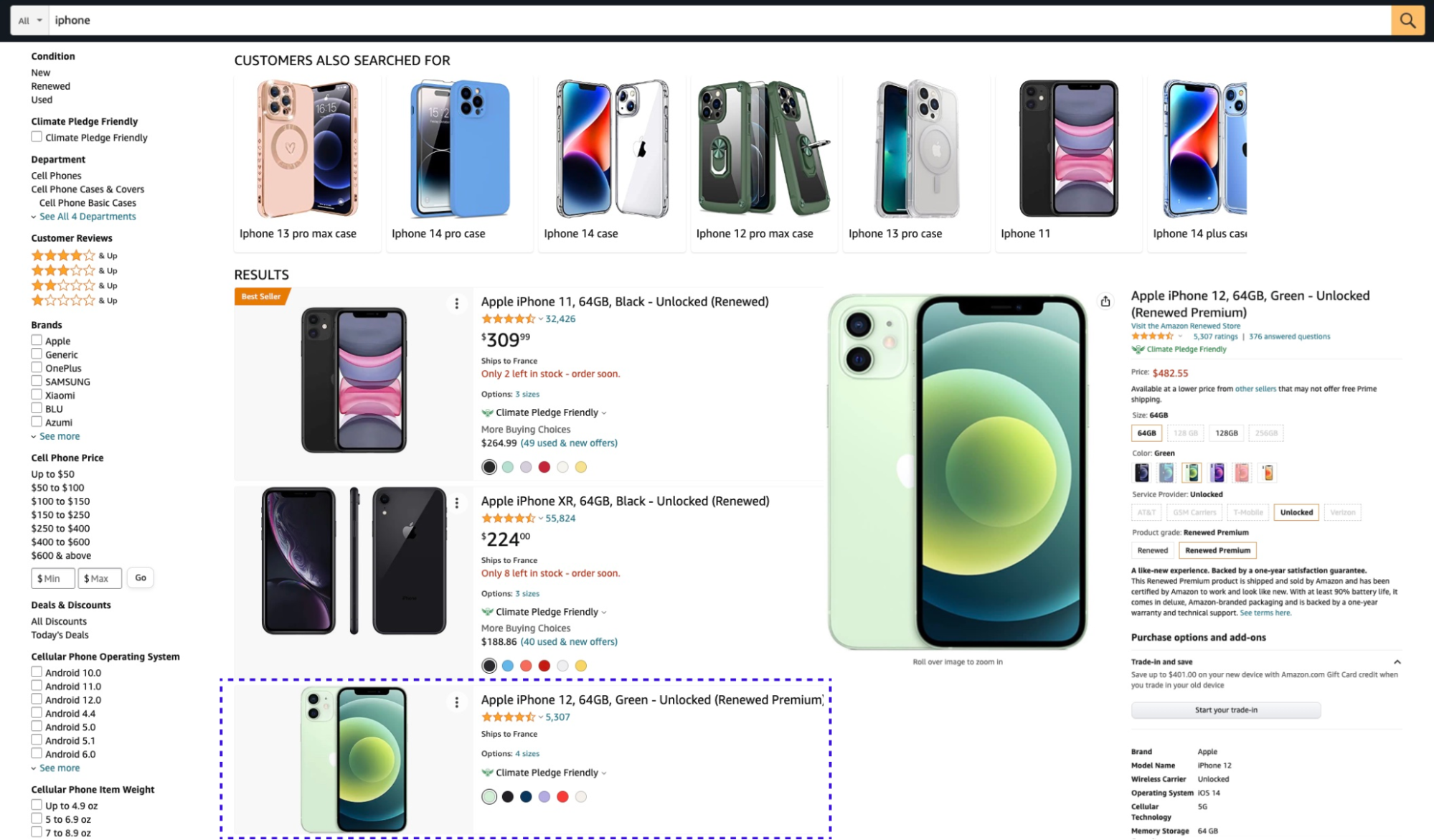

And as you scroll down the search results, you can see previews of each item:

You’ll want to design the experience well, because too much knowledge can start to clutter up your screen. For example, adding item-based accessories and recommendations is important; but it needs to be designed well:

Semantic search – Questions & Answers + Content

What about creating a more natural, semantic search?

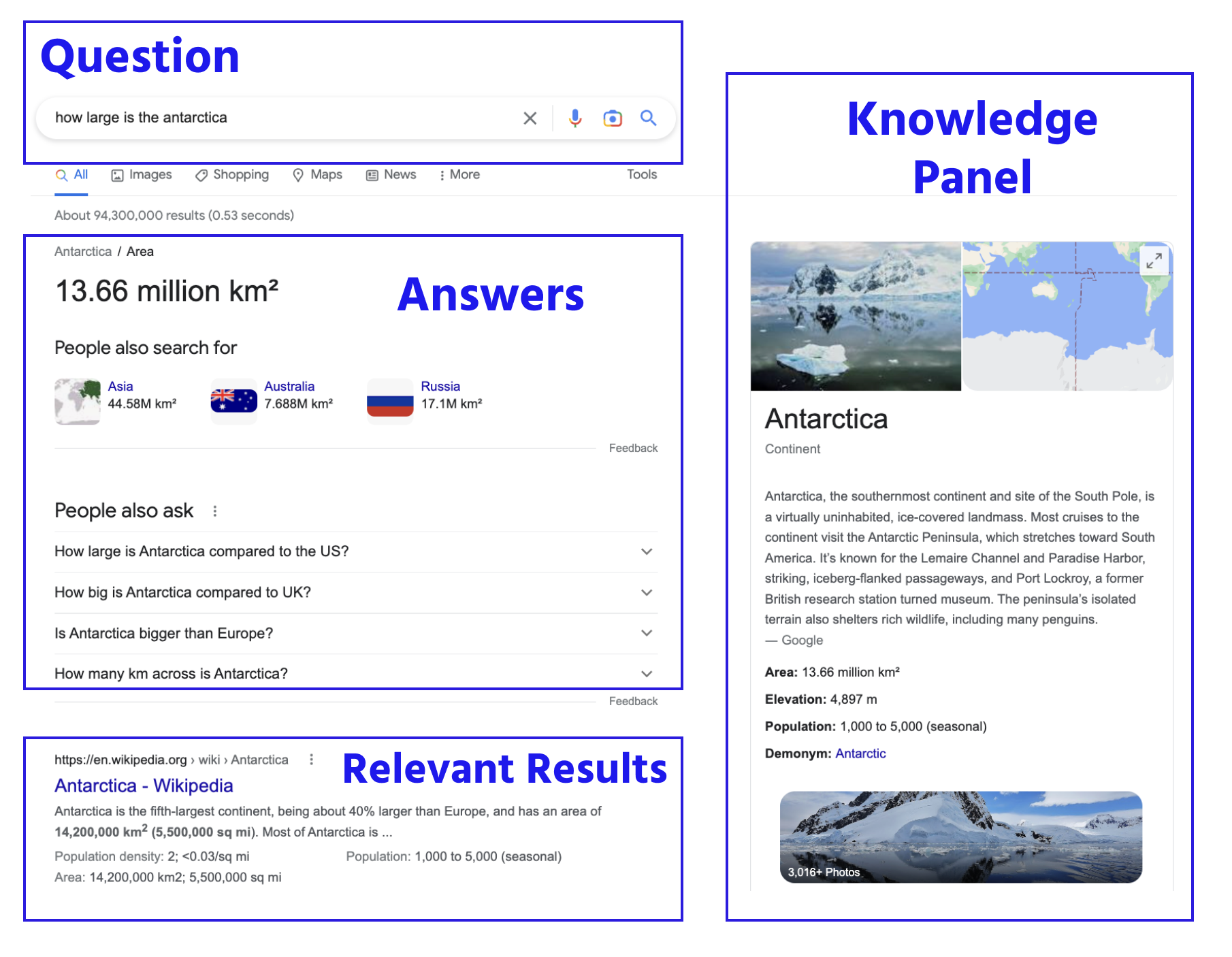

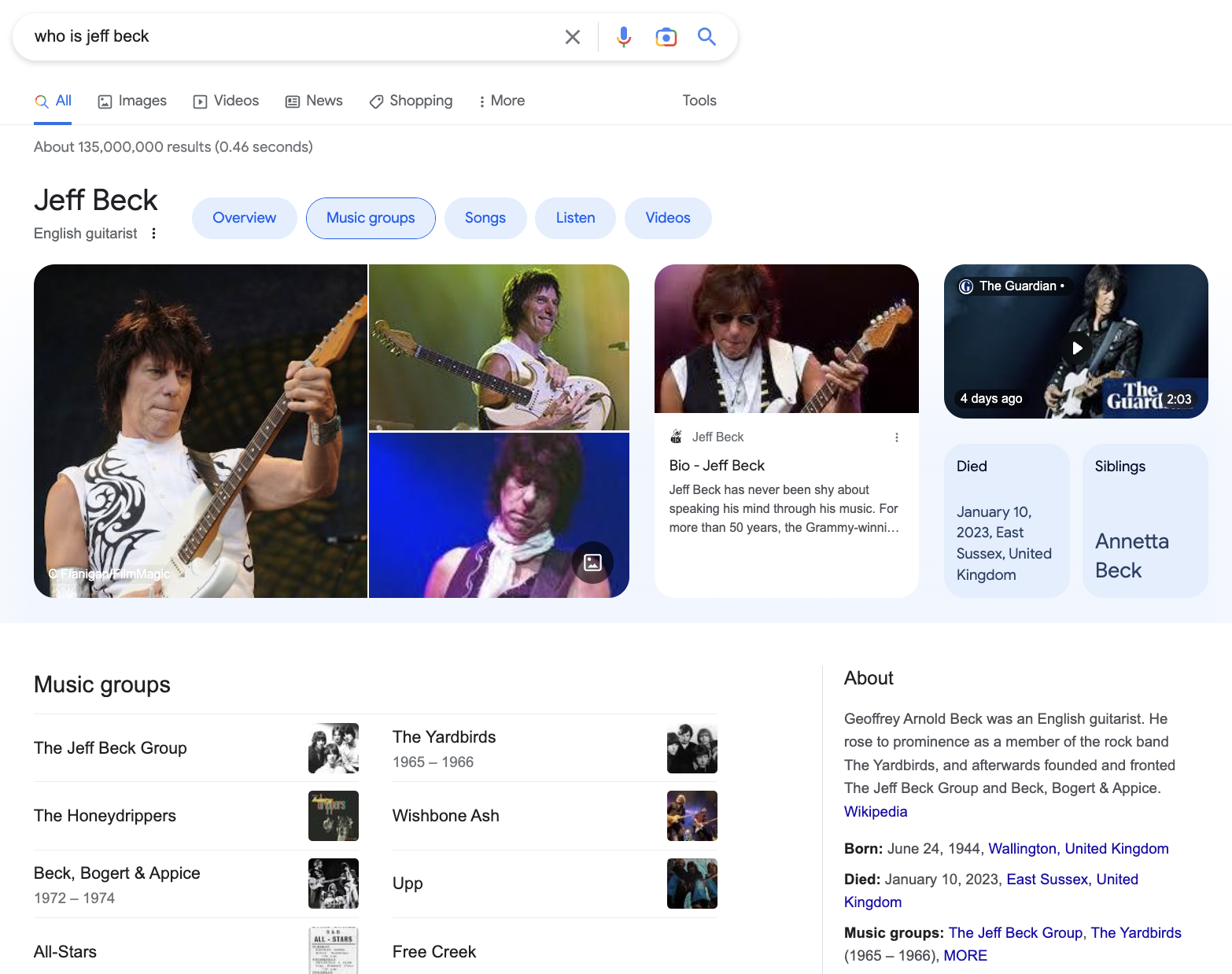

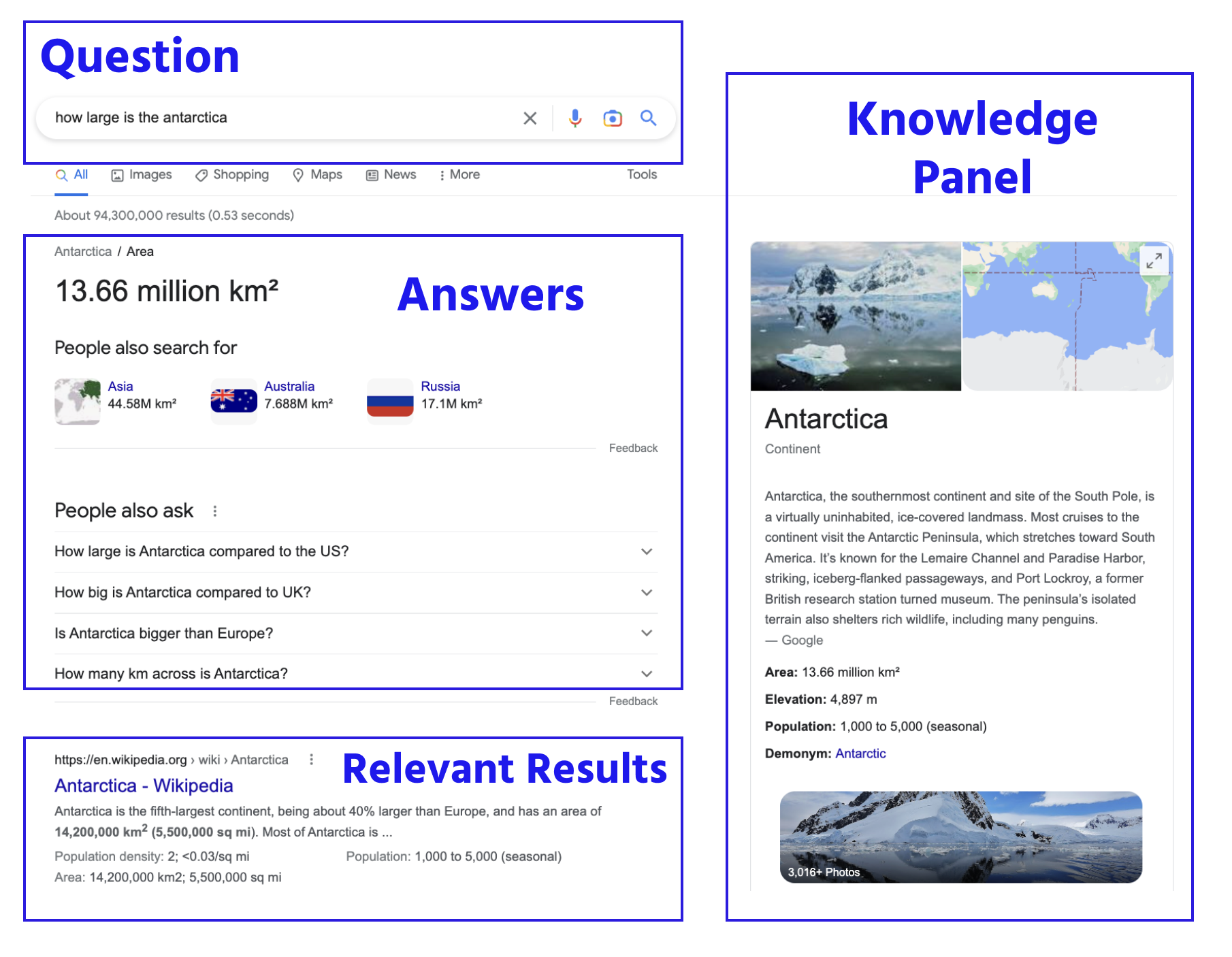

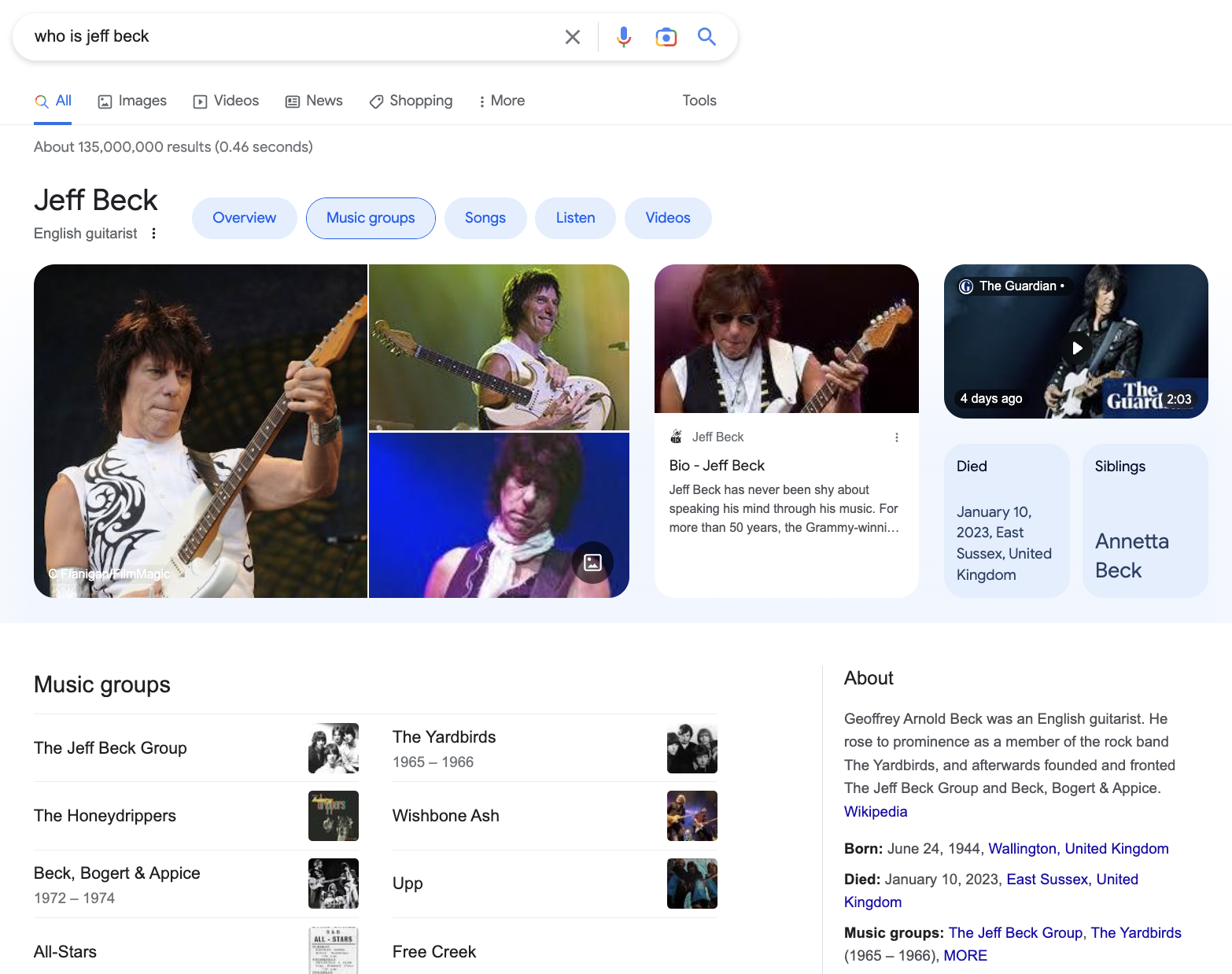

Google has changed our expectations with its knowledge panel, and its ability to answer a question and propose related questions:

There’s a lot going on here: Google answers the question (“how large is the Antarctica?”), and it proposes other questions. It also compares the size of Antarctica to other countries and provides a summary of Antarctica with images and links to get more information about size, population, and history.

There’s really no reason to leave this screen, unless you want more information.

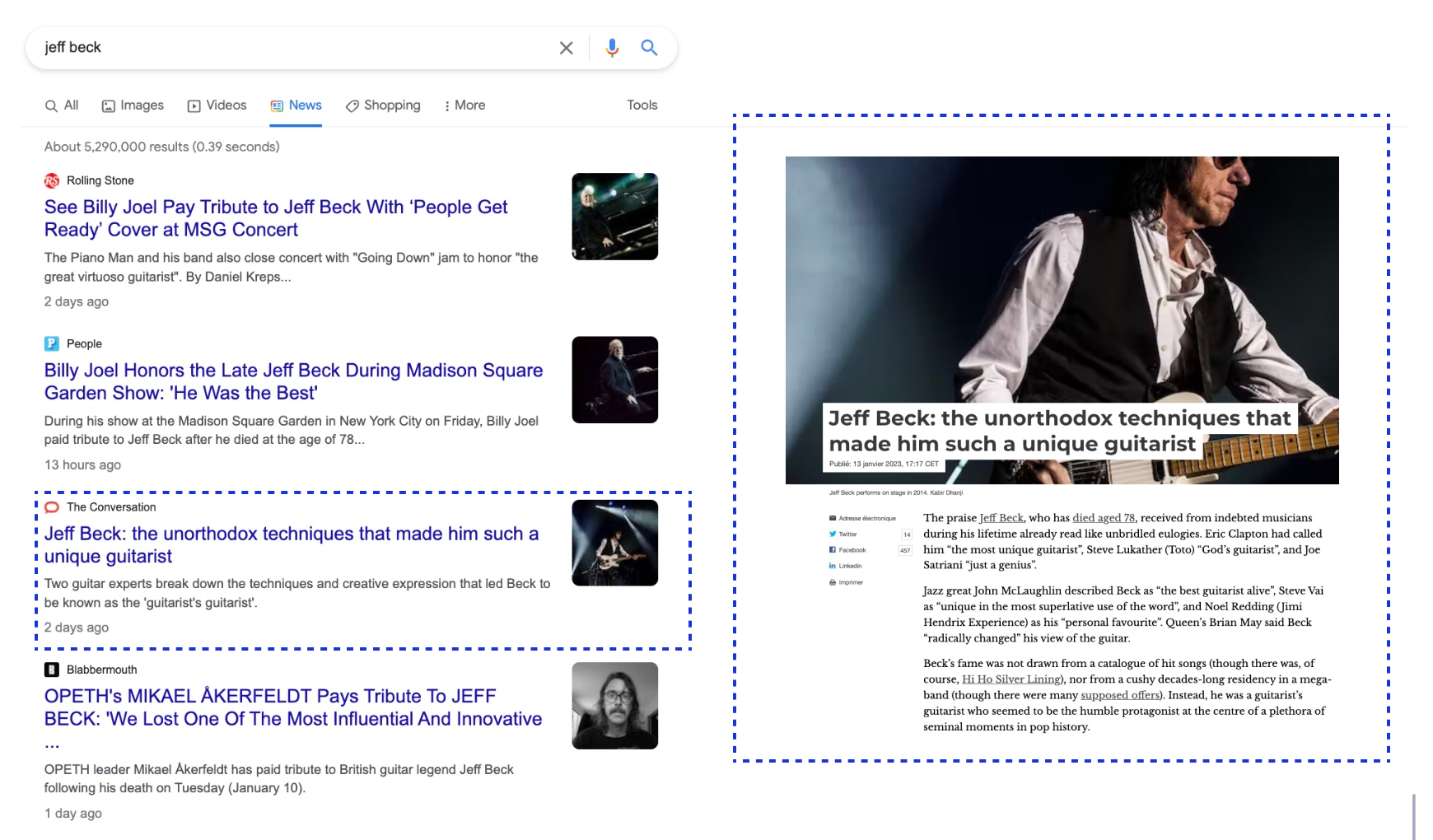

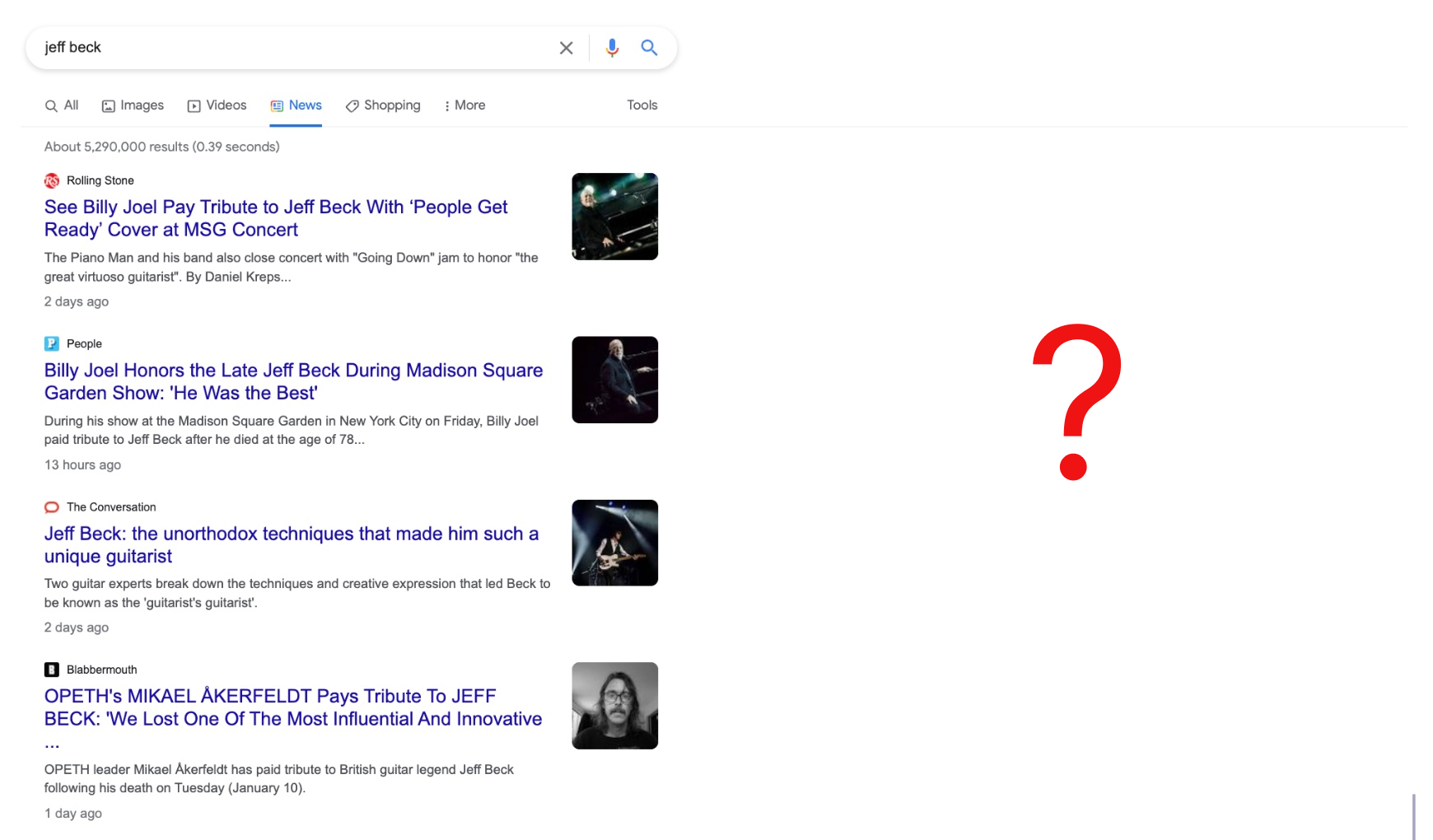

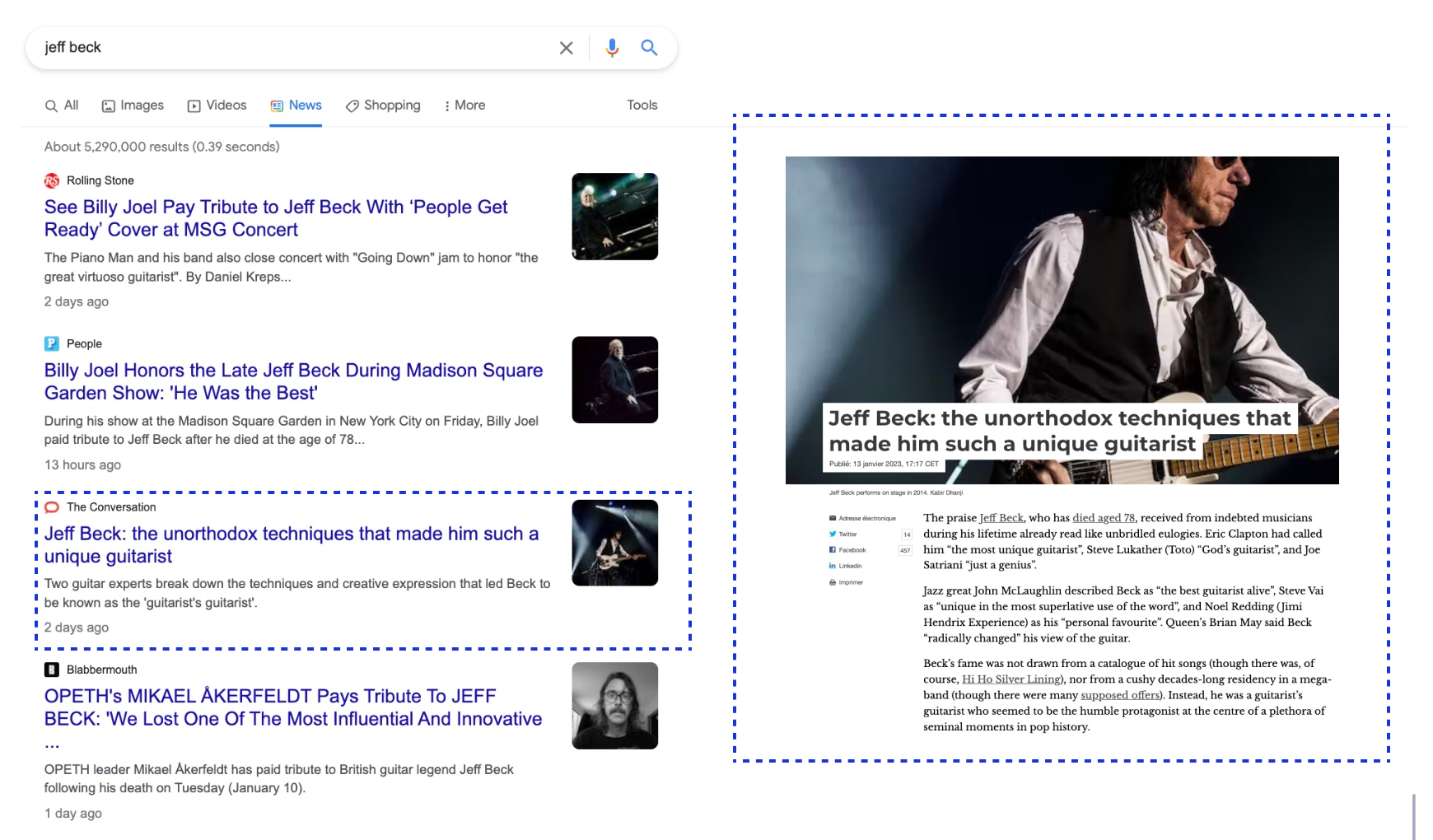

And Google shifts its design based on the topic. Artists get a different treatment. For example, a question (“who is jeff beck?”) returns photos, easy access to his art, and some biographical information:

Oddly, Google doesn’t provide the same knowledge for news, where you’d think it would be even more important to get at least the lead paragraph:

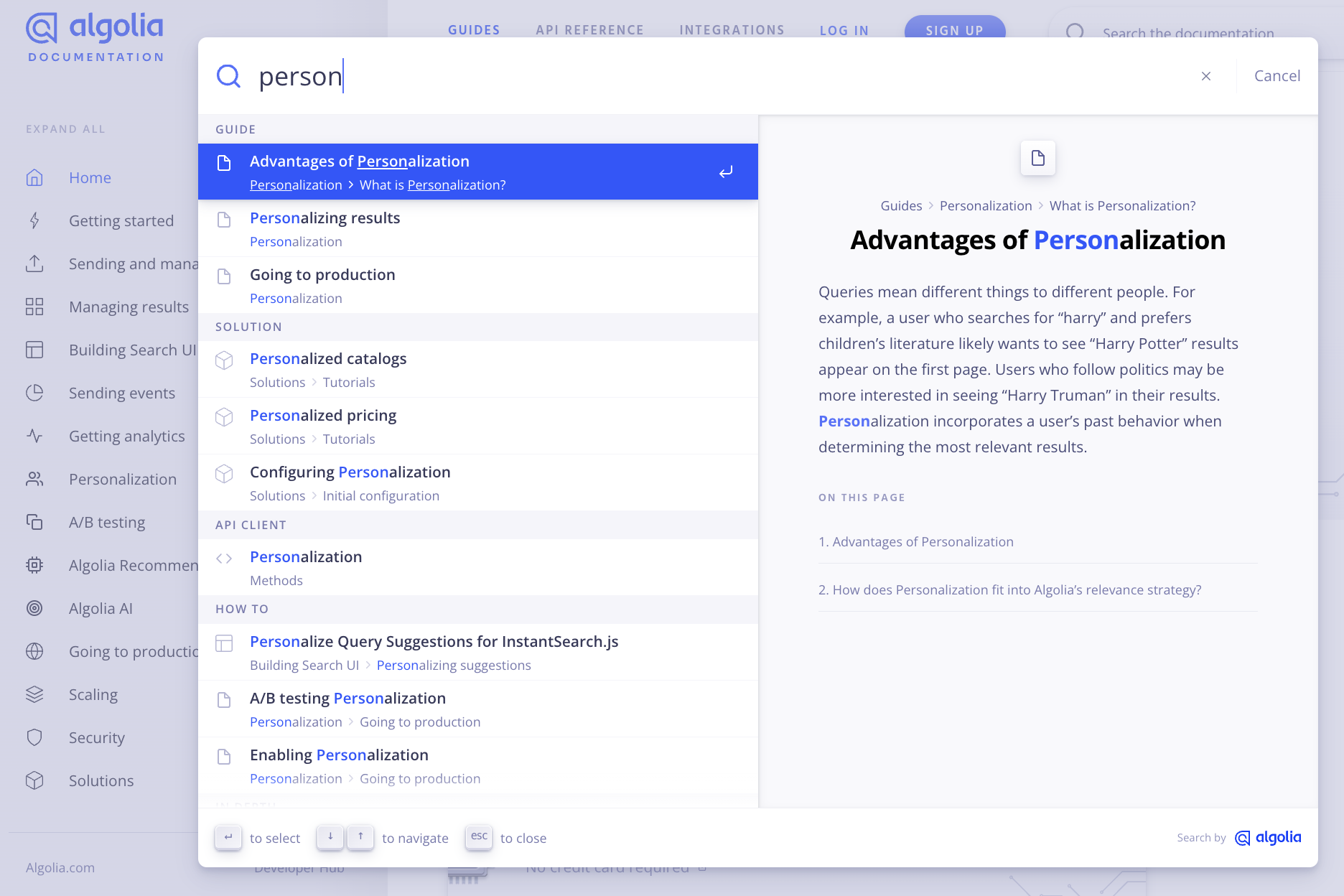

An information-driven website like a news journal, blog, or software documentation can add a snapshot of the content as the user scrolls down the results:

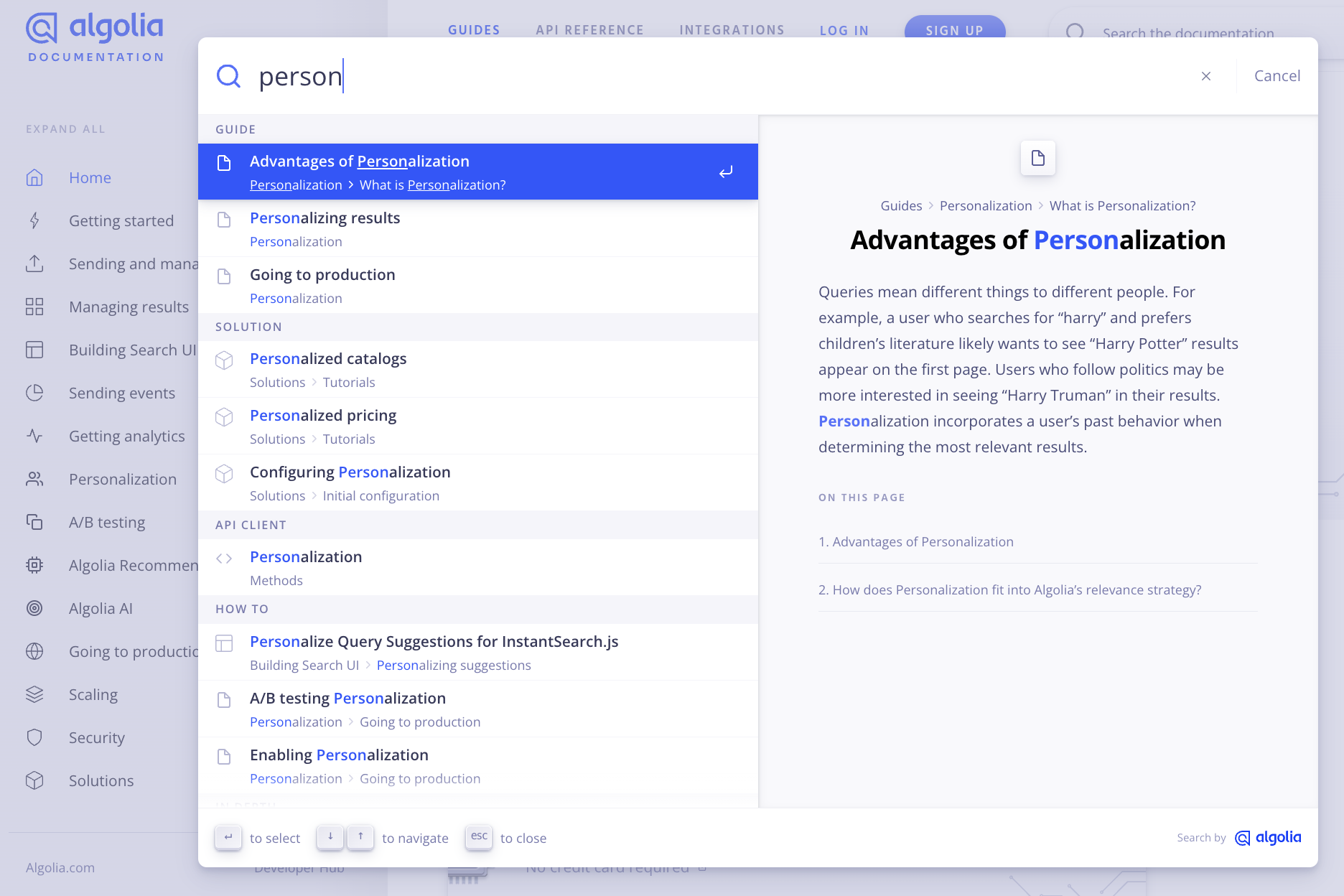

Finally, documentation websites often use the same technique – displaying a snapshot of the page. This saves the developer time by not having to sift through multiple pages until they find their answer:

In sum, knowledge-based search is all about presenting information in multiple ways, not just providing links.

Now let’s dig a bit into how the search engine makes this possible.

The back end – organizing the data into knowledge

For decades there has been a heated debate about the fact that a functioning knowledge management system is not something that can be installed in an intranet like any software system, and that knowledge cannot be stored in documents or databases. With the rise of Knowledge Graphs (KGs), many knowledge management practitioners have asked themselves the question whether KGs are just another database, or whether this is ultimately the missing link between the knowledge level and the information and data levels in the DIKW pyramid as depicted here.

— Andreas Blumauer, Founder & CEO at Semantic Web Company

We can add that Wisdom, in the context of enterprise and ecommerce knowledge management, is related to such intentions as making informed decisions, performing well, creating better marketing campaigns, and increasing customer satisfaction and online revenue.

From data to information to knowledge

Data needs context to give it meaning. Otherwise, it’s simply raw information, such as strings of characters (“A”, “a”, “B”, ..), numbers, and binary 0s and 1s representing true and false. A customer’s name is a string of data. It only becomes a meaningful string when someone searches for a customer’s name or wants to view their sales activity.

Back-end databases and applications provide the data; front-end search interfaces display that data as information and knowledge.

The process & algorithms

To get data from the back end to the front end, engineers and data scientists need to build a data pipeline that feeds the middle layer. The middle layer provides API access to the front end, enabling the front-end engineers to build a federated search interface.

We’ll look at two middle-layer datasets:

- A search index

- A knowledge base (of AI/machine learning vectors or a knowledge graph, as we’ll see below)

The search index – for classic search

Most online companies use a cloud-based search index for their search functionality. A search index is made up of a subset of relevant information, such as searchable keywords and metadata (title, brand, color, price, etc.), and longer free-text descriptions. It also contains information used for displaying, sorting, and filtering the information on the search interface.

When dealing with multiple data sources (e.g., product catalog, customer data), the engineers need to create a “data pipeline” that merges the various data sources into one search index.

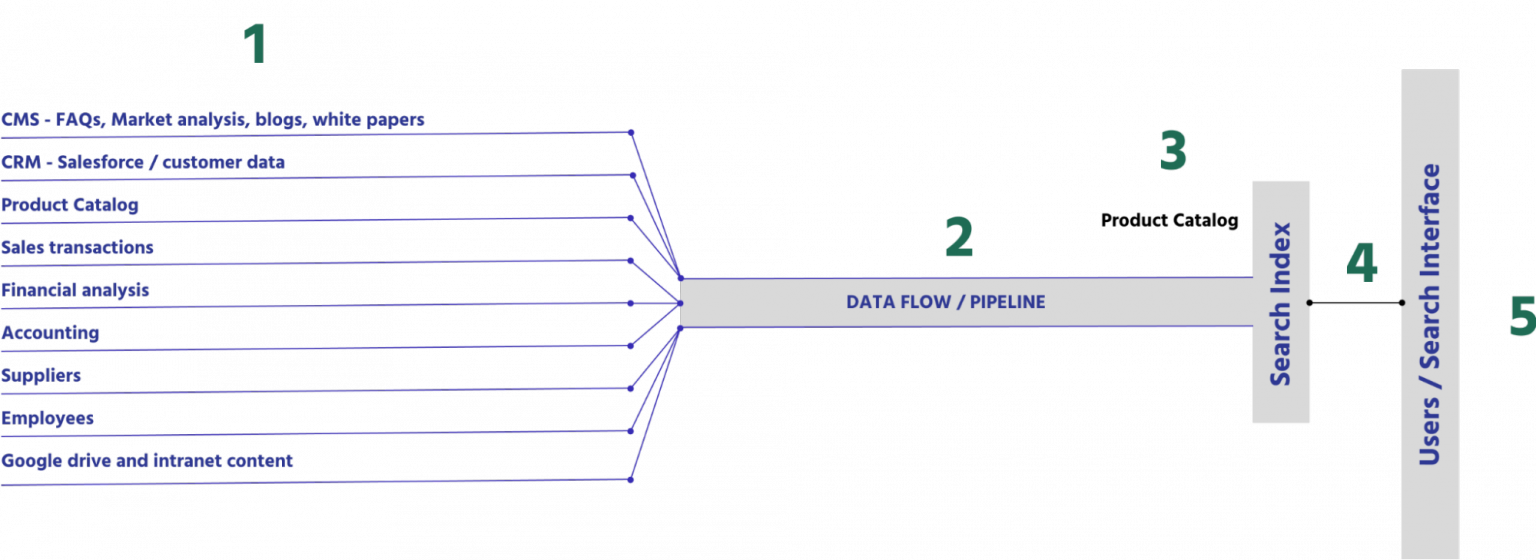

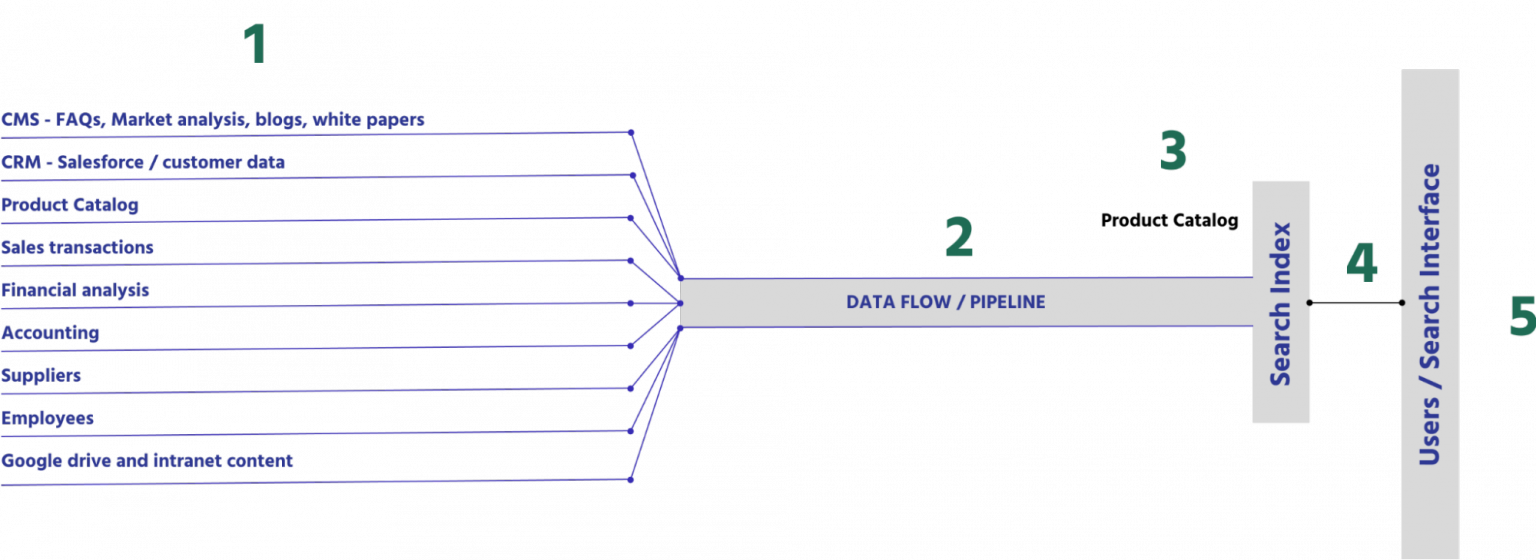

Here’s a picture of the flow between the back end applications to a single search index:

- On the far left, you have a number of typical back-end systems that provide important information for different uses, such as employees seeking information to do their jobs, customers searching through products, and marketers and business partners managing their product catalogs.

- The data flow, called a pipeline, takes a subset of relevant information from each system and performs a merge, which includes reformatting and structuring the data sources for searchability. It is this process that builds the search index.

- The search index contains a large set of structured data that the search engine uses to execute all queries and display all relevant information to the user.

- The search API executes all search requests, returning a set of search results to be displayed on the search interface

- Finally, on the right, you have the search interface itself – the results, facets, recommendations, and other pieces of information for the user to interact with.

The knowledge base – adding knowledge and answers to questions

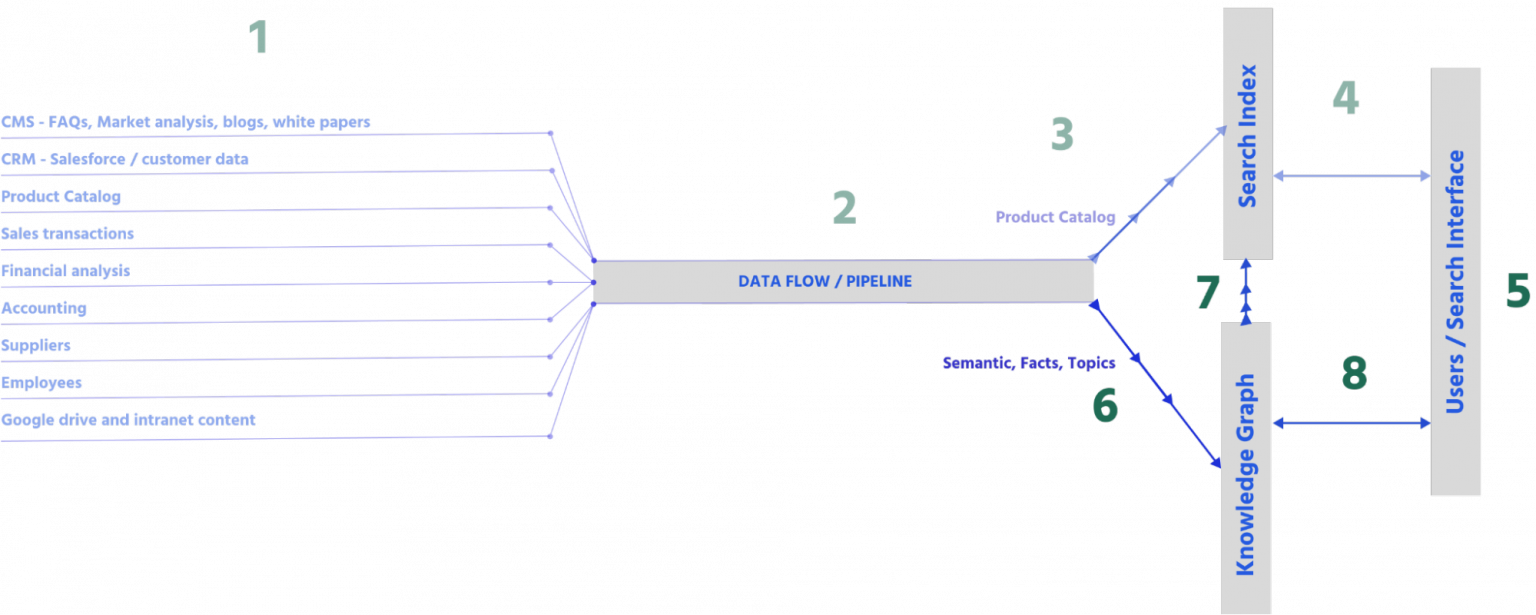

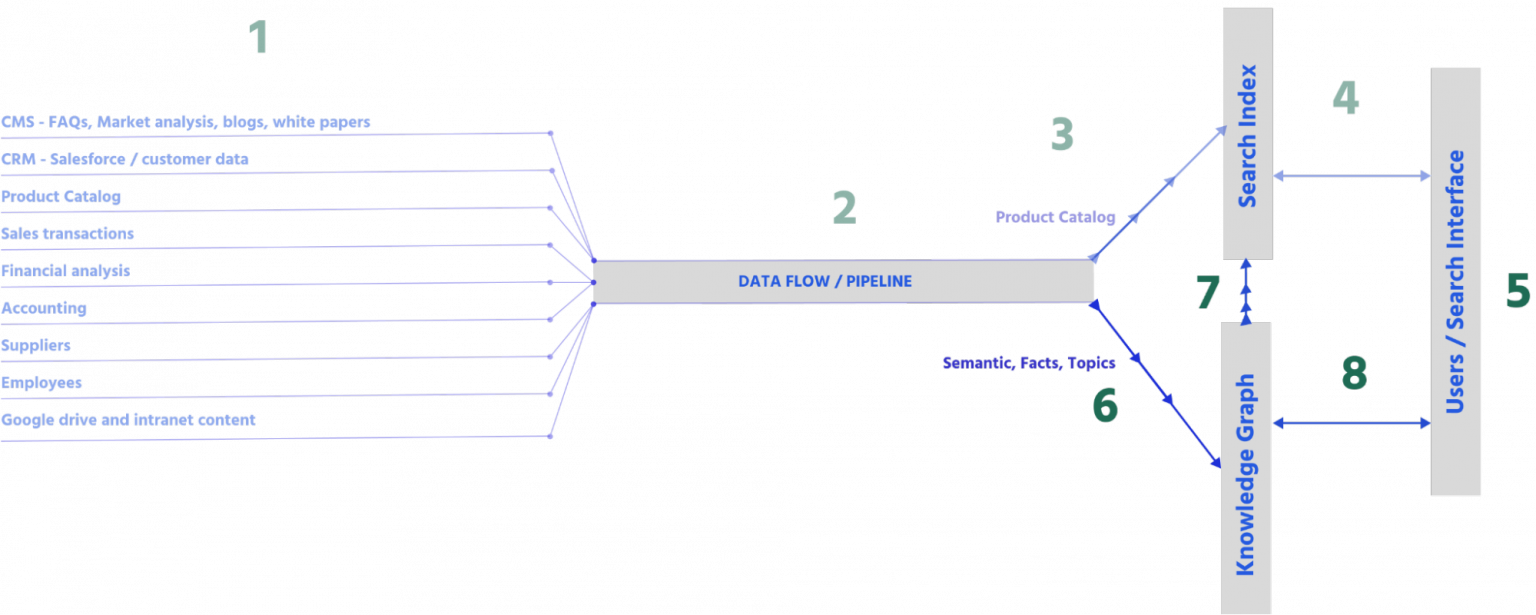

Knowledge management, which includes question answering, comes from a second middle layer of data:

- Knowledge management changes the interface to display knowledge-based information with hyperlinks akin to Google (see examples in the previous section). It also displays answers to questions (see the “who is jeff beck” example above).

- When adding knowledge, the data pipeline process and algorithm must adapt. Before, it only fed the search index; now, there’s an additional process that maps company data into a knowledge base. Additionally, because knowledge requires more related information, the data pipeline needs to do more than just format and structure the data, its algorithms need to rework the data to build vector-based classifications and/or a knowledge graph. We’ll go into more detail below on how to build a knowledge base.

- Once the knowledge base is completed, it may also send some summary data to the search index, with links to the full information. This is done because accessing the search index will usually be faster, and its keyword search is best situated to manage the whole search execution process, especially the relevance. This too is discussed in the next section.

- And, last but not least, the front end now makes two search requests in parallel: one to the search index and the other to the knowledge graph. This should be asynchronous, where the search results appear first and immediately, and the extra knowledge appears immediately after. This delay is often not detectable.

And there you have it – the perfect example of transforming company data into relevant user knowledge. Of course, the devil is in the details, and there are multiple ways to architect this, but this should give a general idea of what’s involved.

Storing knowledge with knowledge graphs and/or machine learning

There are many ways to store knowledge. It can be stored in one search index, or in formats such as vectors and knowledge graphs. We’ll discuss the latter two.

Machine learning & Semantics with neural networks, vectors, and language models

For many years search engines have predominantly relied on keywords, much like the indexes you find in the back of books. Unless a query matches a keyword in your index, the search engine can come up empty-handed. While the concept of “matching” has traditionally powered search engines, a major shift from “matching” to “understanding” is currently underway. This is being driven by AI, which is used to represent text mathematically such that it can be conceptually understood by machines. Concepts are taking over from keywords and it’s great news for everyone.

– Hamish Ogilvy, VP, Artificial Intelligence at Algolia

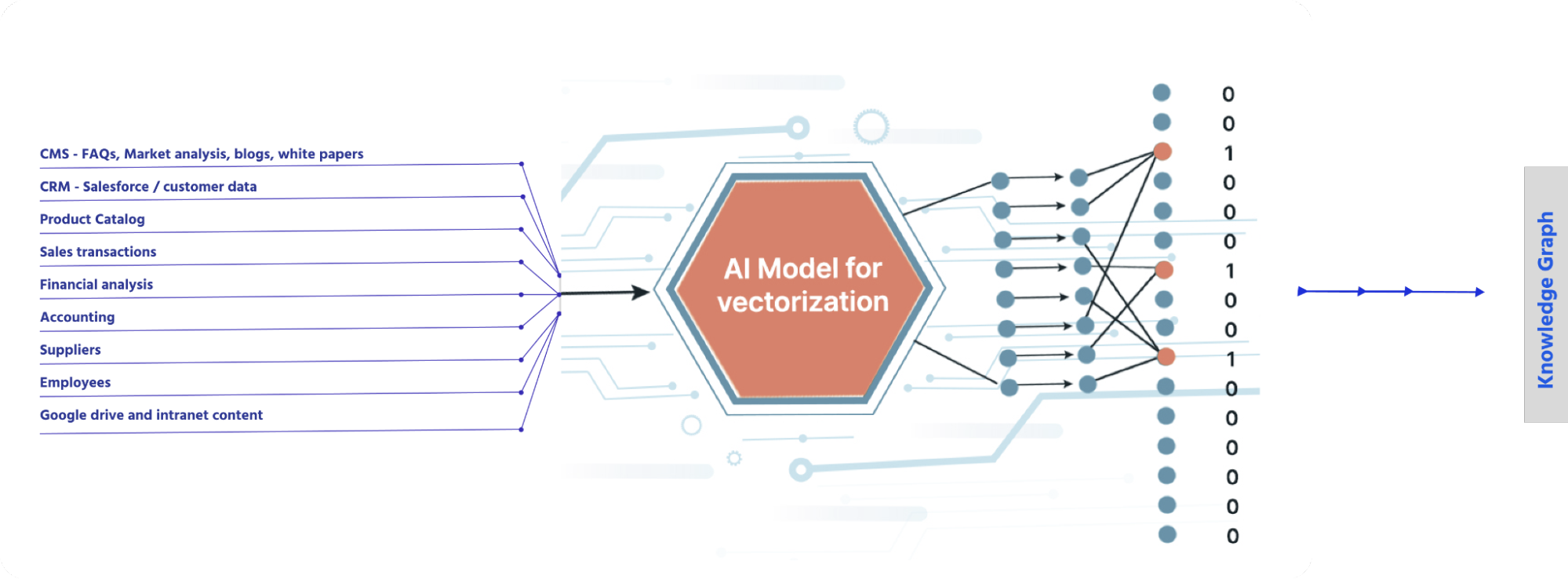

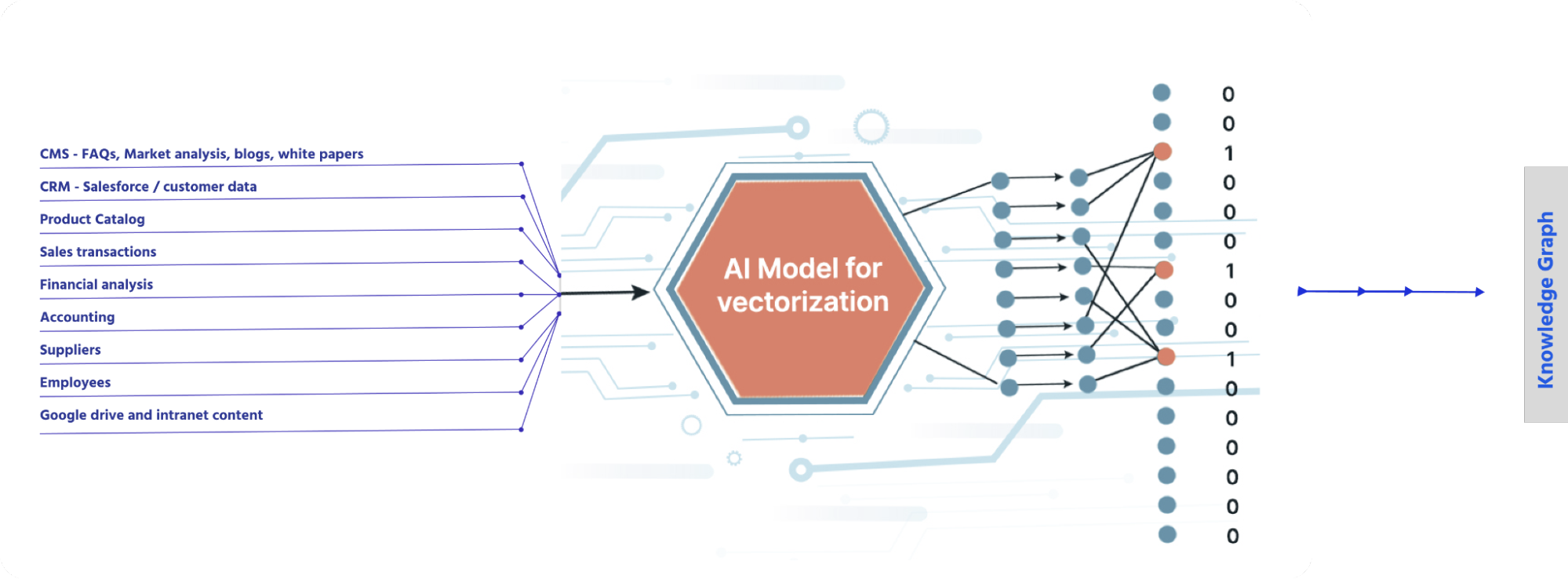

When machines “read” a lot (a very lot) of data, they self-teach themselves to understand, or give meaning to, the content. With automated self-learning, they can accomplish the following:

- Find similarities in words (detecting synonyms, word relatedness)

- Extract topics and hierarchies of topics from the texts

- Give context to create an even deeper understanding of the text, to enable inferences and deep knowledge

- Predict next words

- Generate content

Most ML technologies (like neural networks) create some form of a vectorized dataset. Vectorization is the process of converting words into vectors (numbers) which allows their meaning to be encoded and processed mathematically. You can think of vectors as groups of numbers that represent words. In practice, vectors are used for automating synonyms, clustering documents, detecting specific meanings and intents in queries, and ranking results. Vectorization is very versatile, and other objects — like entire documents, images, video, audio, and more — can be vectorized, too.

We can visualize vectors using a simple 3-dimensional diagram:

.jpg)

Image via Medium showing vector space dimensions.

You and I can understand the meaning and relationship of terms such as “king,” “queen,” “ruler,” “monarchy,” and “royalty.” With vectors, computers can make sense of these terms by clustering them together in n-dimensional space. In the 3-dimensional examples above, each term can be located with coordinates (x, y, z), and similarity can be calculated using distance and angles.

Machine learning models can then be applied to understand that words which are close together in vector space — like “king” and “queen” — are related, and words that are even closer — “queen” and “ruler” — may be synonymous.

Vectors can also be added, subtracted, or multiplied to find meaning and build relationships. One of the most popular examples is king – man + woman = queen. Machines might use this kind of relationship to determine gender or understand gender relationship. Search engines could use this capability to determine the largest mountain ranges in an area, find “the best” vacation itinerary, or identify diet cola alternatives. Those are just three examples, but there are thousands more!

What does AI have to do with knowledge management?

By finding word relationships and building factual and conceptual inferences, machine learning enables a system to synthesize a company’s unique knowledge. Google has invested millions of engineering hours for more than a decade to design its deep learning BERT language models and search infrastructure for internet search.

The same can be done in ecommerce, media, and other online services.

What is a Knowledge Graph?

In the history of search, there are many different approaches to search, for example, classic keyword search, which matches individual words or phrases based on textual matching or synonyms. Ontologies and knowledge graphs took that a step forward by adding topic matching, where items and documents that fall within the same topic or contain the same entities (not necessarily the same words) are also considered relevant to a query.

– Julien Lemoine, Co-founder & former CTO at Algolia

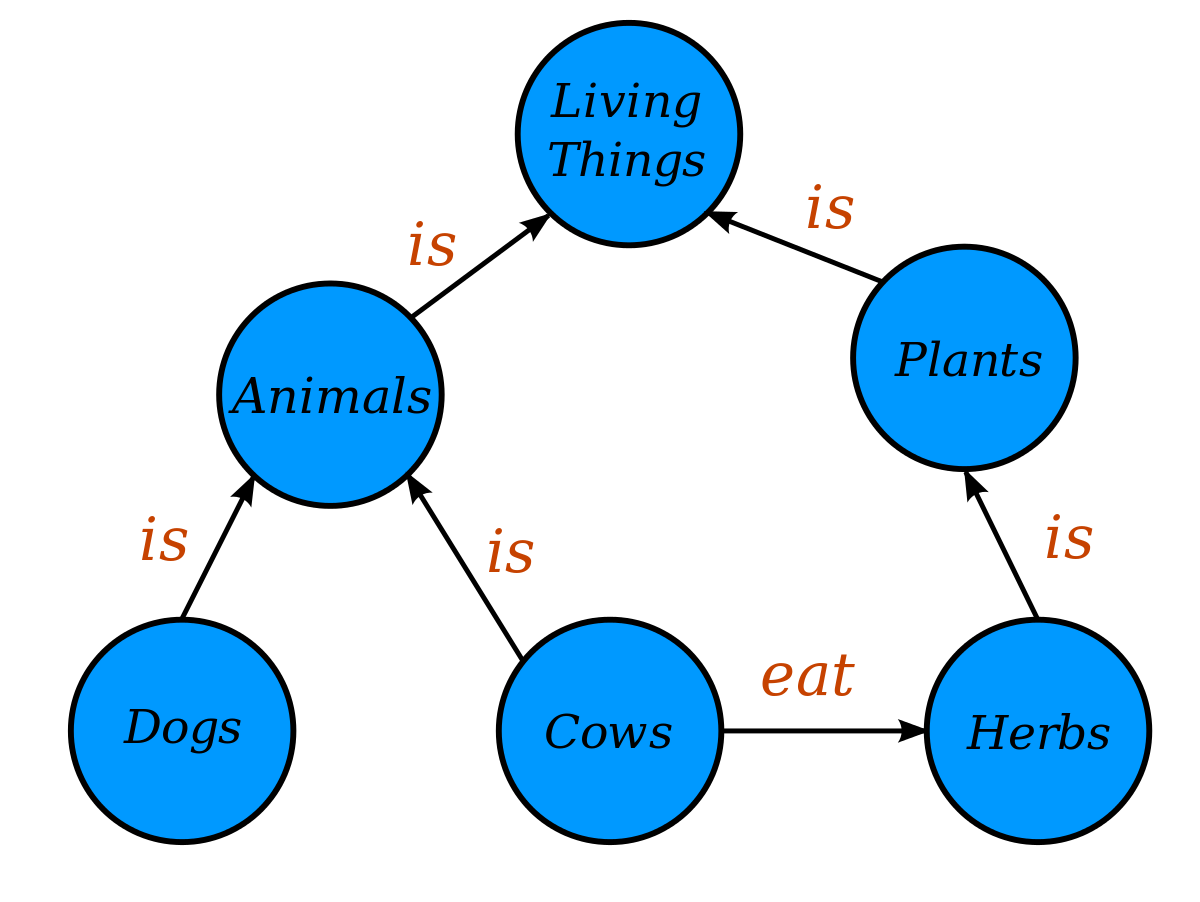

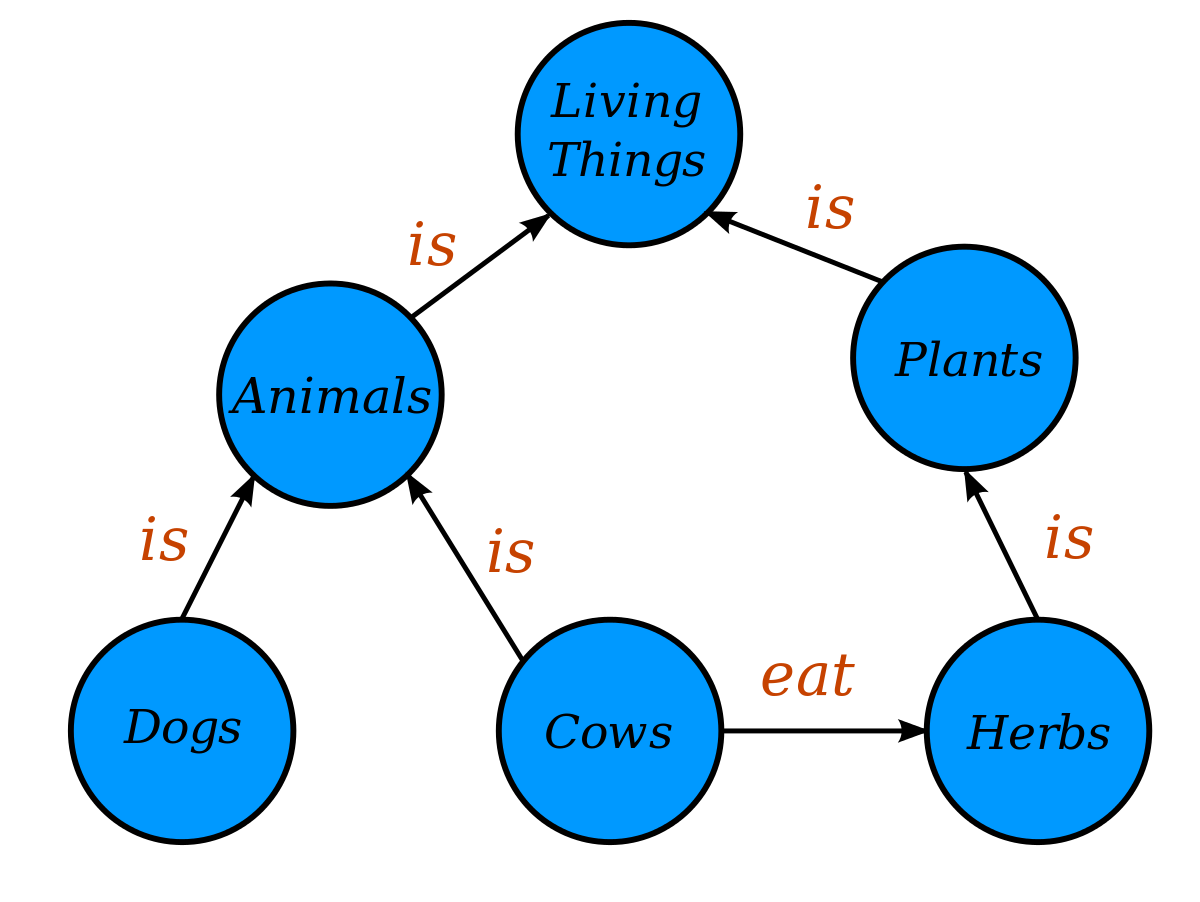

Let’s start with the “ontologies and hierarchies”. A knowledge graph contains “nodes” such as concepts, subject topics, hierarchies, and other such ways to classify information. It also contains “edges” that show relationships between the nodes.

Usually, the nodes are nouns and edges are verbs. We’ll use a simple example: “cows” are “animals”, “animals” are “living things”, and “cows” eat “herbs”:

You can see that living things are plants that eat. Even more importantly, this schema enables us to make inferences, such as what kinds of food living things need to eat.

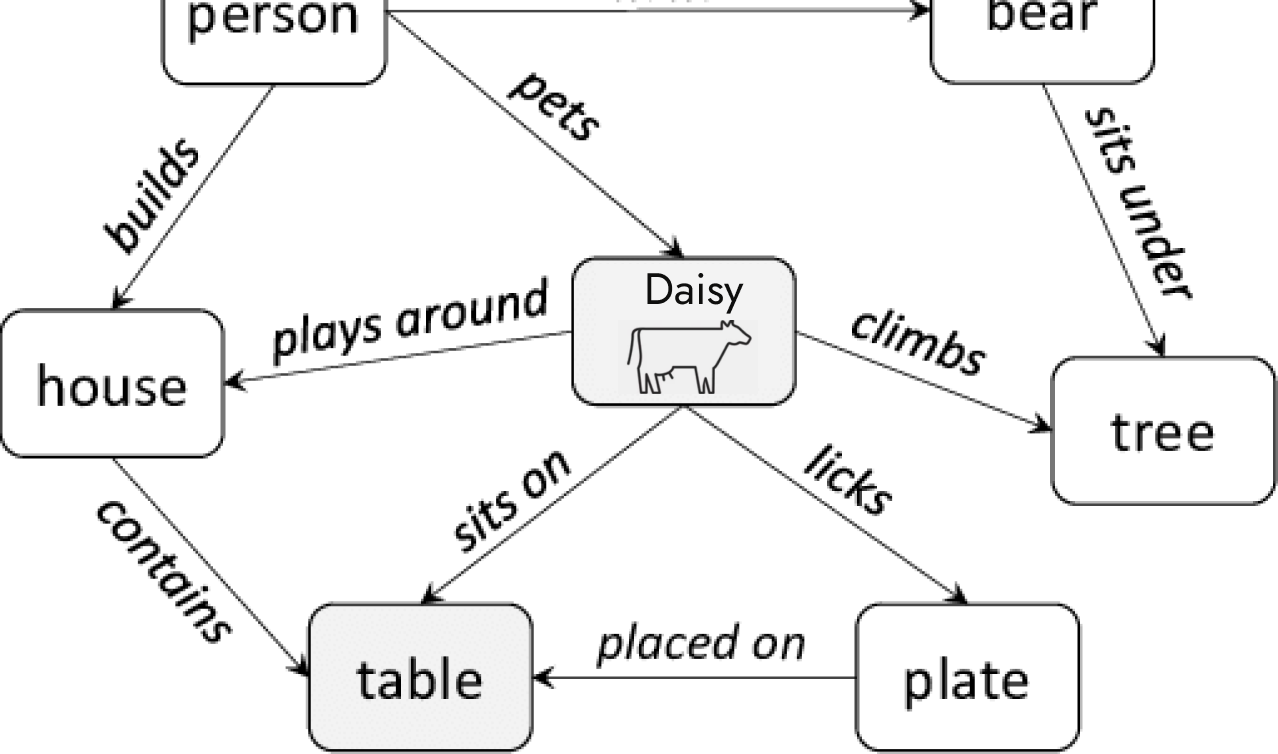

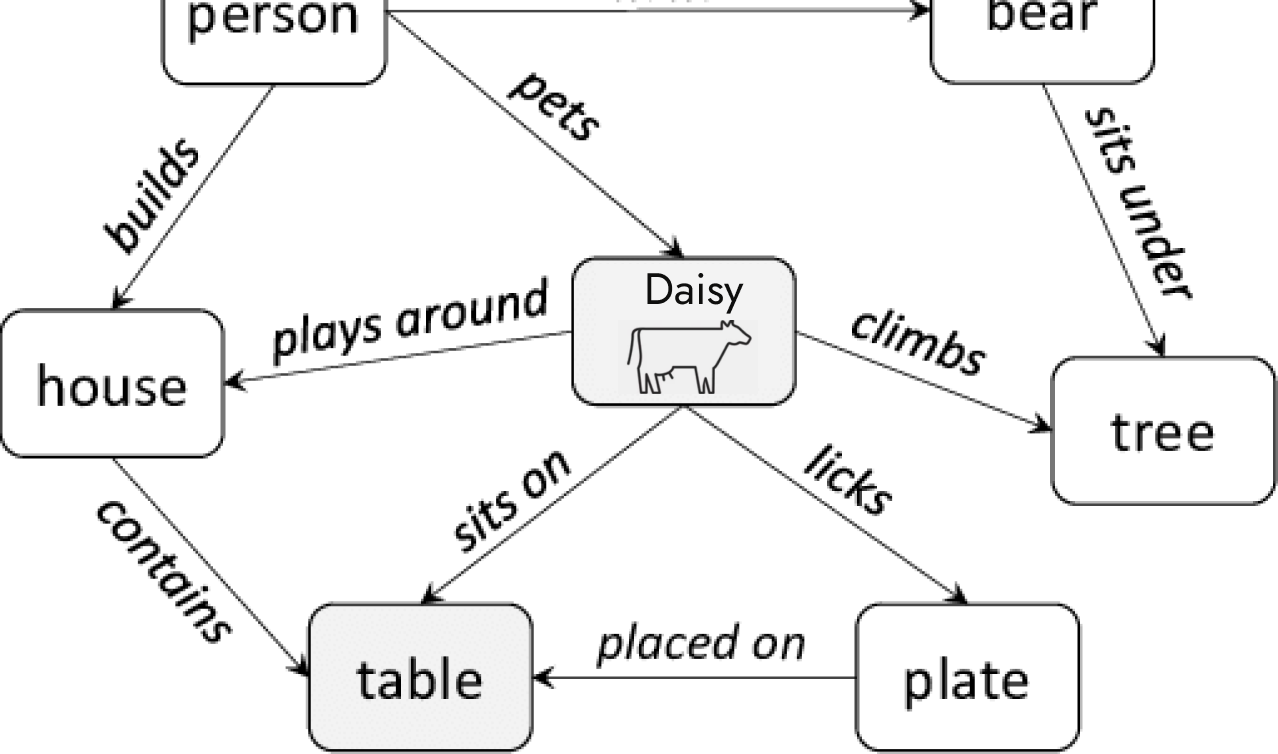

Once categories are in place, a company can distribute its real objects (products, finances, customers, and other facts) into the graph. For example, a specific “living thing” (Daisy) can be understood by its classifications and relationships to other facts and objects.

We can say that, as a living thing, Daisy needs to eat, and therefore, we can infer that some of its behaviors relate to eating. Thus, a search for “cow food” might not only show different brands of cow food, but may show knowledge about how cows find food, nutritional information, and more.

On a high level, that’s how a knowledge graph represents knowledge, with facts (nodes) and factual relationships (edges) and chains of inferences that provide a summary or tell a full story.

It’s important to understand some of the challenges with knowledge graphs:

- For large scale enterprises, the sheer amount of facts and relationships a knowledge graph needs to be successful can be daunting. If there are any holes in its knowledge, or wrong information, the graph becomes unusable and could be risky to rely on if important data is misleading.

- Information changes and gets outdated or goes stale; old data might be wrong or misclassified; new data sometimes requires rethinking the old concepts and relationships; and so on.

- A knowledge graph normally relies on tedious manual entry by experts in the field to reinforce the quality and quantity of data. It requires a great amount of their precious (and expensive) time to enter everything right.

Essentially, for a knowledge graph to be useful, and not incomplete or inaccurate, it needs a lot of data and ongoing maintenance. But one way to contain that is to focus on smaller goals. For example, an ecommerce company can limit the breadth of its content by creating a small knowledge graph for its online services, including knowledge about products, sales, marketing, and customers. On the other hand, the same ecommerce company can create a second larger knowledge graph for its employees. But the latter might be best served by semantics and vectorization.

Worth mentioning: Semantics and knowledge graphs can work together. A knowledge graph can feed a vectorized classification, so that the middle layer can combine the classic search index with a robust, automated AI index based on powerful language models like LLMs and GPT.

What’s now, what’s next

Multiple results, deep knowledge, answers to questions – all on one search interface. Chat with us if you want to know what’s happening now, what’s coming next, and how to build your own knowledgeable federated search experience.

.jpg)